The A.I. Megathread (LLM, GPT, Development)

LM Studio - Local AI on your computer

Run local AI models like gpt-oss, Llama, Gemma, Qwen, and DeepSeek privately on your computer.

LM Studio

Discover, download, and run local LLMs

Supports MetaAI's Llama 2

Run any LLaMa, Falcon, MPT, StarCoder, Replit, or GPT-Neo-X ggml models from Hugging Face

v0.2.6

Download LM Studio for Windows

LM Studio is provided for personal use under the terms.

For business use, please get in touch.

With LM Studio, you can ...

LM Studio supports any ggml Llama, MPT, and StarCoder model on Hugging Face (Llama 2, Orca, Vicuna, Nous Hermes, WizardCoder, MPT, etc.)

Minimum requirements: M1/M2 Mac, or a Windows PC with a processor that supports AVX2. Linux is under development.

Vishvanand Subramanian (@vishvanands) on Threads

Before ChatGPT, it took me days to write a non-trivial web scraper. Just used ChatGPT to write a pretty good scraper in 30 min. Great for quickly bypassing div name randomization, understanding schemas or layouts. Web Scraping is a relatively small industry ($500M) but don’t let that fool...

www.threads.net

TL;DR: Almost like your feedforward networks, shown to be up to 220x faster at inference time (depending on width) thanks to the regionalization of the input space.

Paper: [2308.14711] Fast Feedforward Networks

GitHub: GitHub - pbelcak/fastfeedforward: A repository for log-time feedforward networks

PyPI: pip install fastfeedforward

Abstract:

Fast feedforward networks can be used anywhere where feedforward and mixture-of-experts networks are used, delivering a significant speedup.

We break the linear link between the layer size and its inference cost by introducing the fast feedforward (FFF) architecture, a log-time alternative to feedforward networks. We demonstrate that FFFs are up to 220x faster than feedforward networks, up to 6x faster than mixture-of-experts networks, and exhibit better training properties than mixtures of experts thanks to noiseless conditional execution. Pushing FFFs to the limit, we show that they can use as little as 1% of layer neurons for inference in vision transformers while preserving 94.2% of predictive performance.
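To make the "log-time" claim a bit more concrete, here is a toy sketch of conditional execution in plain Python. It is not the API of the `fastfeedforward` package and not the authors' code. The layout is an assumption for illustration: a binary tree of decision neurons routes each input to one small leaf network, so only one root-to-leaf path is evaluated. All names (`make_fff`, `fff_forward`) are hypothetical.

```python
import random


def dot(a, b):
    """Plain dot product of two equal-length lists."""
    return sum(x * y for x, y in zip(a, b))


def make_fff(depth, in_dim, leaf_width, out_dim, seed=0):
    """Build a toy tree-structured layer with random weights (illustration only)."""
    rng = random.Random(seed)

    def vec(n):
        return [rng.uniform(-1.0, 1.0) for _ in range(n)]

    def mat(rows, cols):
        return [vec(cols) for _ in range(rows)]

    # 2**depth - 1 decision neurons, stored in heap order (root at index 0).
    nodes = [(vec(in_dim), rng.uniform(-1.0, 1.0)) for _ in range(2 ** depth - 1)]
    # 2**depth leaves, each a tiny ReLU feedforward block.
    leaves = [
        {"w1": mat(leaf_width, in_dim), "w2": mat(out_dim, leaf_width)}
        for _ in range(2 ** depth)
    ]
    return {"depth": depth, "nodes": nodes, "leaves": leaves}


def fff_forward(model, x):
    """Follow one root-to-leaf path, then run only that leaf's neurons."""
    idx = 0
    for _ in range(model["depth"]):
        w, b = model["nodes"][idx]
        go_right = dot(w, x) + b > 0  # hard decision at inference time
        idx = 2 * idx + (2 if go_right else 1)
    leaf_index = idx - (2 ** model["depth"] - 1)
    leaf = model["leaves"][leaf_index]
    hidden = [max(0.0, dot(row, x)) for row in leaf["w1"]]
    output = [dot(row, hidden) for row in leaf["w2"]]
    return output, leaf_index
```

With `depth=3` and `leaf_width=2`, a forward pass evaluates 3 decision neurons plus 2 leaf neurons, out of 7 decision neurons and 16 leaf neurons in total. The evaluated count grows with the depth rather than with the total width, which is the general idea behind breaking the link between layer size and inference cost.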

Paper: [2309.09400] CulturaX: A Cleaned, Enormous, and Multilingual Dataset for Large Language Models in 167 Languages

Hugging Face datasets: uonlp/CulturaX · Datasets at Hugging Face

Abstract:

The driving factors behind the development of large language models (LLMs) with impressive learning capabilities are their colossal model sizes and extensive training datasets. Along with the progress in natural language processing, LLMs have been frequently made accessible to the public to foster deeper investigation and applications. However, when it comes to training datasets for these LLMs, especially the recent state-of-the-art models, they are often not fully disclosed. Creating training data for high-performing LLMs involves extensive cleaning and deduplication to ensure the necessary level of quality. The lack of transparency for training data has thus hampered research on attributing and addressing hallucination and bias issues in LLMs, hindering replication efforts and further advancements in the community. These challenges become even more pronounced in multilingual learning scenarios, where the available multilingual text datasets are often inadequately collected and cleaned. Consequently, there is a lack of open-source and readily usable dataset to effectively train LLMs in multiple languages. To overcome this issue, we present CulturaX, a substantial multilingual dataset with 6.3 trillion tokens in 167 languages, tailored for LLM development. Our dataset undergoes meticulous cleaning and deduplication through a rigorous pipeline of multiple stages to accomplish the best quality for model training, including language identification, URL-based filtering, metric-based cleaning, document refinement, and data deduplication. CulturaX is fully released to the public in HuggingFace to facilitate research and advancements in multilingual LLMs.
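For a feel of what a multi-stage cleaning pipeline like the one in the abstract looks like, here is a small sketch in plain Python. It is not the CulturaX code. The blocklist, the word-count threshold, and the exact-hash deduplication are all stand-in assumptions, and the language identification stage is left out because it needs a trained classifier.

```python
import hashlib
from urllib.parse import urlparse

# Hypothetical settings, chosen only for this illustration.
BLOCKED_DOMAINS = {"spam.example"}
MIN_WORDS = 5


def url_allowed(url):
    """URL-based filtering: drop documents from blocklisted hosts."""
    return urlparse(url).hostname not in BLOCKED_DOMAINS


def passes_metrics(text):
    """Metric-based cleaning: a single toy metric, the word count."""
    return len(text.split()) >= MIN_WORDS


def refine(text):
    """Document refinement: here, just collapse runs of whitespace."""
    return " ".join(text.split())


def clean(docs):
    """Run the stages in order and drop exact duplicates (case-insensitive)."""
    seen = set()
    kept = []
    for doc in docs:
        if not url_allowed(doc["url"]):
            continue
        if not passes_metrics(doc["text"]):
            continue
        text = refine(doc["text"])
        digest = hashlib.sha256(text.lower().encode("utf-8")).hexdigest()
        if digest in seen:
            continue
        seen.add(digest)
        kept.append({"url": doc["url"], "text": text})
    return kept
```

Exact hashing only catches identical documents after normalization. Catching near-duplicates at this scale generally needs a fuzzy method, which this sketch does not attempt.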

Mark Zuckerberg (@zuck) on Threads

Priscilla and I are optimistic about AI helping scientists to cure, prevent or manage all diseases this century. We're starting a new project at CZI to build a virtual cell to predict how every cell in the human body will behave -- healthy or diseased. To do this, we're building one of the...

www.threads.net

A catalogue of genetic mutations to help pinpoint the cause of diseases

We've released a catalogue of ‘missense’ mutations where researchers can learn more about what effect they may have. Missense variants are genetic mutations that can affect the function of human proteins. In some cases, they can lead to diseases such as cystic fibrosis, sickle-cell anaemia, or...

September 19, 2023

New AI tool classifies the effects of 71 million ‘missense’ mutations

Uncovering the root causes of disease is one of the greatest challenges in human genetics. With millions of possible mutations and limited experimental data, it’s largely still a mystery which ones could give rise to disease. This knowledge is crucial to faster diagnosis and developing life-saving treatments.

Today, we’re releasing a catalogue of ‘missense’ mutations where researchers can learn more about what effect they may have. Missense variants are genetic mutations that can affect the function of human proteins. In some cases, they can lead to diseases such as cystic fibrosis, sickle-cell anaemia, or cancer.

The AlphaMissense catalogue was developed using AlphaMissense, our new AI model which classifies missense variants. In a paper published in Science, we show it categorised 89% of all 71 million possible missense variants as either likely pathogenic or likely benign. By contrast, only 0.1% have been confirmed by human experts.

AI tools that can accurately predict the effect of variants have the power to accelerate research across fields from molecular biology to clinical and statistical genetics. Experiments to uncover disease-causing mutations are expensive and laborious – every protein is unique and each experiment has to be designed separately which can take months. By using AI predictions, researchers can get a preview of results for thousands of proteins at a time, which can help to prioritise resources and accelerate more complex studies.

We’ve made all of our predictions freely available to the research community and open sourced the model code for AlphaMissense.

AlphaMissense predicted the pathogenicity of all possible 71 million missense variants. It classified 89% – predicting 57% were likely benign and 32% were likely pathogenic.

What is a missense variant?

A missense variant is a single letter substitution in DNA that results in a different amino acid within a protein. If you think of DNA as a language, switching one letter can change a word and alter the meaning of a sentence altogether. In this case, a substitution changes which amino acid is translated, which can affect the function of a protein.

The average person is carrying more than 9,000 missense variants. Most are benign and have little to no effect, but others are pathogenic and can severely disrupt protein function. Missense variants can be used in the diagnosis of rare genetic diseases, where a few or even a single missense variant may directly cause disease. They are also important for studying complex diseases, like type 2 diabetes, which can be caused by a combination of many different types of genetic changes.

Classifying missense variants is an important step in understanding which of these protein changes could give rise to disease. Of more than 4 million missense variants that have been seen already in humans, only 2% have been annotated as pathogenic or benign by experts, roughly 0.1% of all 71 million possible missense variants. The rest are considered ‘variants of unknown significance’ due to a lack of experimental or clinical data on their impact. With AlphaMissense we now have the clearest picture to date by classifying 89% of variants using a threshold that yielded 90% precision on a database of known disease variants.
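The percentages quoted above are consistent with each other, which is quick to check. The snippet below only reuses figures from the post. It treats "more than 4 million" as exactly 4 million, so the counts are rough lower bounds rather than reported values.

```python
TOTAL_POSSIBLE = 71_000_000   # all possible missense variants (from the post)
SEEN_IN_HUMANS = 4_000_000    # "more than 4 million", used here as a lower bound

# 2% of the variants already seen in humans have an expert annotation.
expert_annotated = SEEN_IN_HUMANS * 2 // 100

# Expressed as a share of every possible variant, that is roughly 0.1%.
expert_share_percent = 100 * expert_annotated / TOTAL_POSSIBLE

# AlphaMissense: 57% likely benign plus 32% likely pathogenic gives 89% classified.
benign_percent = 57
pathogenic_percent = 32
classified_percent = benign_percent + pathogenic_percent
classified_count = TOTAL_POSSIBLE * classified_percent // 100

print(f"expert-annotated variants: about {expert_annotated:,}")
print(f"share of all possible variants: {expert_share_percent:.2f}%")
print(f"classified by the model: {classified_percent}% (about {classified_count:,})")
```

So the "0.1% confirmed by human experts" figure is the same 2% of seen variants, restated against the full 71 million.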

Pathogenic or benign: How AlphaMissense classifies variants

AlphaMissense is based on our breakthrough model AlphaFold, which predicted structures for nearly all proteins known to science from their amino acid sequences. Our adapted model can predict the pathogenicity of missense variants altering individual amino acids of proteins.

To train AlphaMissense, we fine-tuned AlphaFold on labels distinguishing variants seen in human and closely related primate populations. Variants commonly seen are treated as benign, and variants never seen are treated as pathogenic. AlphaMissense does not predict the change in protein structure upon mutation or other effects on protein stability. Instead, it leverages databases of related protein sequences and structural context of variants to produce a score between 0 and 1 approximately rating the likelihood of a variant being pathogenic. The continuous score allows users to choose a threshold for classifying variants as pathogenic or benign that matches their accuracy requirements.
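A short sketch of what "choose a threshold" can mean in practice, assuming you already have a score between 0 and 1 for a variant. The two cut-offs below are placeholders picked for illustration, not the values used in the paper, and `classify` is a hypothetical helper rather than part of the released code.

```python
def classify(score, benign_below=0.3, pathogenic_above=0.7):
    """Map a pathogenicity score in [0, 1] to a label.

    The default cut-offs are hypothetical. Moving them apart trades
    coverage (fewer variants get a label) for precision.
    """
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score < benign_below:
        return "likely benign"
    if score > pathogenic_above:
        return "likely pathogenic"
    return "uncertain"


for s in (0.05, 0.5, 0.95):
    print(s, classify(s))
```

Scores that fall between the two cut-offs stay "uncertain", which matches the grey category in the structure figures further down.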

An illustration of how AlphaMissense classifies human missense variants. A missense variant is input, and the AI system scores it as pathogenic or likely benign. AlphaMissense combines structural context and protein language modelling, and is fine-tuned on human and primate variant population frequency databases.

AlphaMissense achieves state-of-the-art predictions across a wide range of genetic and experimental benchmarks, all without explicitly training on such data. Our tool outperformed other computational methods when used to classify variants from ClinVar, a public archive of data on the relationship between human variants and disease. Our model was also the most accurate method for predicting results from the lab, which shows it is consistent with different ways of measuring pathogenicity.

AlphaMissense outperforms other computational methods on predicting missense variant effects.

Left: Comparing AlphaMissense and other methods’ performance on classifying variants from the ClinVar public archive. Methods shown in grey were trained directly on ClinVar and their performance on this benchmark is likely overestimated since some of their training variants are contained in this test set.

Right: Graph comparing AlphaMissense and other methods’ performance on predicting measurements from biological experiments.

Building a community resource

AlphaMissense builds on AlphaFold to further the world’s understanding of proteins. One year ago, we released 200 million protein structures predicted using AlphaFold – which is helping millions of scientists around the world to accelerate research and pave the way toward new discoveries. We look forward to seeing how AlphaMissense can help solve open questions at the heart of genomics and across biological science.

We’ve made AlphaMissense’s predictions freely available to the scientific community. Together with EMBL-EBI, we are also making them more usable for researchers through the Ensembl Variant Effect Predictor.

In addition to our look-up table of missense mutations, we’ve shared the expanded predictions of all possible 216 million single amino acid sequence substitutions across more than 19,000 human proteins. We’ve also included the average prediction for each gene, which is similar to measuring a gene's evolutionary constraint – this indicates how essential the gene is for the organism’s survival.

Examples of AlphaMissense predictions overlaid on AlphaFold predicted structures (red=predicted as pathogenic, blue=predicted as benign, grey=uncertain). Red dots represent known pathogenic missense variants, blue dots represent known benign variants from the ClinVar database.

Left: HBB protein. Variants in this protein can cause sickle cell anaemia.

Right: CFTR protein. Variants in this protein can cause cystic fibrosis.

Accelerating research into genetic diseases

A key step in translating this research is collaborating with the scientific community. We have been working in partnership with Genomics England, to explore how these predictions could help study the genetics of rare diseases. Genomics England cross-referenced AlphaMissense’s findings with variant pathogenicity data previously aggregated with human participants. Their evaluation confirmed our predictions are accurate and consistent, providing another real-world benchmark for AlphaMissense.

While our predictions are not designed to be used in the clinic directly – and should be interpreted with other sources of evidence – this work has the potential to improve the diagnosis of rare genetic disorders, and help discover new disease-causing genes.

Ultimately, we hope that AlphaMissense, together with other tools, will allow researchers to better understand diseases and develop new life-saving treatments.

Learn more about AlphaMissense:

Read our paper in Science

Download the Ensembl Variant Effect Predictor plugin

Download AlphaMissense code

'This is the last opportunity for us to wake up': A leading economist warns we're headed for an AI-driven cataclysm

How Daron Acemoglu, one of the world's most respected experts on the economic effects of technology, learned to start worrying and fear AI.

DISCOURSE ECONOMY

The AI heretic

How a leading economist learned to start worrying and fear artificial intelligence

"This is the last opportunity for us to wake up," warns Daron Acemoglu. Simon Simard for Insider

Aki Ito

Sep 18, 2023, 6:00 AM EDT

Sure, there have been a few nutjobs out there who think AI will wipe out the human race. But ever since ChatGPT's explosive emergence last winter, the bigger concern for most of us has been whether these tools will soon write, code, analyze, brainstorm, compose, design, and illustrate us out of our jobs. To that, Silicon Valley and corporate America have been curiously united in their optimism. Yes, a few people might lose out, they say. But there's no need to panic. AI is going to make us more productive, and that will be great for society. Ultimately, technology always is.

As a reporter who's written about technology and the economy for years, I too subscribed to the prevailing optimism. After all, it was backed by a surprising consensus among economists, who normally can't agree on something as fundamental as what money is. For half a century, economists have worshiped technology as an unambiguous force for good. Normally, the "dismal science" argues, giving one person a bigger slice of the economic pie requires giving a smaller slice to the sucker next door. But technology, economists believed, was different. Invent the steam engine or the automobile or TikTok, and poof! Like magic, the pie gets bigger, allowing everyone to enjoy a bigger slice.

"Economists viewed technological change as this amazing thing," says Katya Klinova, the head of AI, labor, and the economy at the nonprofit Partnership on AI. "How much of it do we need? As much as possible. When? Yesterday. Where? Everywhere." To resist technology was to invite stagnation, poverty, darkness. Countless economic models, as well as all of modern history, seemed to prove a simple and irrefutable equation: technology = prosperity for everyone.

There's just one problem with that formulation: It's turning out to be wrong. And the economist who's doing the most to sound the alarm — the heretic who argues that the current trajectory of AI is far more likely to hurt us rather than help us — is perhaps the world's leading expert on technology's effects on the economy.

OX-DRAWN CARTS — Farming became more productive in medieval times, but the gains from new technology rarely benefited peasants. Universal History Archive/Universal Images Group via Getty Images

Daron Acemoglu, an economist at MIT, is so prolific and respected that he's long been viewed as a leading candidate for the Nobel prize in economics. He used to believe in the conventional wisdom, that technology is always a force for economic good. But now, with his longtime collaborator Simon Johnson, Acemoglu has written a 546-page treatise that demolishes the Church of Technology, demonstrating how innovation often winds up being harmful to society. In their book "Power and Progress," Acemoglu and Johnson showcase a series of major inventions over the course of the past 1,000 years that, contrary to what we've been told, did nothing to improve, and sometimes even worsened, the lives of most people. And in the periods when big technological breakthroughs did lead to widespread good — the examples that today's AI optimists cite — it was only because ruling elites were forced to share the gains of innovation widely, rather than keeping the profits and power for themselves. It was the fight over technology, not just technology on its own, that wound up benefiting society.

"The broad-based prosperity of the past was not the result of any automatic, guaranteed gains of technological progress," Acemoglu and Johnson write. "We are beneficiaries of progress, mainly because our predecessors made the progress work for more people."

Today, in this moment of peak AI, which path are we on? The terrific one, where we all benefit from these new tools? Or the terrible one, where most of us lose out? Over the course of three conversations this summer, Acemoglu told me he's worried we're currently hurtling down a road that will end in catastrophe. All around him, he sees a torrent of warning signs — the kind that, in the past, wound up favoring the few over the many. Power concentrated in the hands of a handful of tech behemoths. Technologists, bosses, and researchers focused on replacing human workers instead of empowering them. An obsession with worker surveillance. Record-low unionization. Weakened democracies. What Acemoglu's research shows — what history tells us — is that tech-driven dystopias aren't some sci-fi rarity. They're actually far more common than anyone has realized.

"There's a fair likelihood that if we don't do a course correction, we're going to have a truly two-tier system," Acemoglu told me. "A small number of people are going to be on top — they're going to design and use those technologies — and a very large number of people will only have marginal jobs, or not very meaningful jobs." The result, he fears, is a future of lower wages for most of us.

Acemoglu shares these dire warnings not to urge workers to resist AI altogether, nor to resign us to counting down the years to our economic doom. He sees the possibility of a beneficial outcome for AI — "the technology we have in our hands has all the capabilities to bring lots of good" — but only if workers, policymakers, researchers, and maybe even a few high-minded tech moguls make it so. Given how rapidly ChatGPT has spread throughout the workplace — 81% of large companies in one survey said they're already using AI to replace repetitive work — Acemoglu is urging society to act quickly. And his first task is a steep one: deprogramming all of us from what he calls the "blind techno-optimism" espoused by the "modern oligarchy."

"This," he told me, "is the last opportunity for us to wake up."

THE SPINNING JENNY — New textile machines in the 18th and 19th centuries drove many skilled workers into lower-wage jobs. Hulton Archive/Getty Images/Getty Images

Acemoglu, 56, lives with his wife and two sons in a quiet, affluent suburb of Boston. But he was born 5,000 miles away in Istanbul, to a country mired in chaos. When he was 3, the military seized control of the government and his father, a left-leaning professor who feared the family's home would be raided, burned his books. The economy crumbled under the weight of triple-digit inflation, crushing debt, and high unemployment. When Acemoglu was 13, the military detained and tried hundreds of thousands of people, torturing and executing many. Watching the violence and poverty all around him, Acemoglu started to wonder about the relationship between dictatorships and economic growth — a question he wouldn't be able to study freely if he stayed in Turkey. At 19, he left to attend college in the UK. By the freakishly young age of 25, he completed his doctorate in economics at the London School of Economics.

Moving to Boston to teach at MIT, Acemoglu was quick to make waves in his chosen field. To this day his most cited paper, written with Johnson and another longtime collaborator, James Robinson, tackles the question he pondered as a teenager: Do democratic countries develop better economies than dictatorships? It's a huge question — one that's hard to answer, because it could be that poverty leads to dictatorship, not the other way around. So Acemoglu and his coauthors employed a clever workaround. They looked at European colonies with high mortality rates, where history showed that power remained concentrated in the hands of the few settlers willing to brave death and disease, versus colonies with low mortality rates, where a larger influx of settlers pushed for property rights and political rights that checked the power of the state. The conclusion: Colonies that developed what they came to call "inclusive" institutions — ones that encouraged investment and enforced the rule of law — ended up richer than their authoritarian neighbors. In their ambitious and sprawling book, "Why Nations Fail," Acemoglu and Robinson rejected factors like culture, weather, and geography as things that made some countries rich and others poor. The only factor that really mattered was democracy.

The book was an unexpected bestseller, and economists hailed it as paradigm-shifting. But Acemoglu was also pursuing a different line of research that had long fascinated him: technological progress. Like almost all of his colleagues, he started off as an unabashed techno-optimist. In 2008, he published a textbook for graduate students that endorsed the technology-is-always-good orthodoxy. "I was following the canon of economic models, and in all of these models, technological change is the main mover of GDP per capita and wages," Acemoglu told me. "I did not question them."

But as he thought about it more, he started to wonder whether there might be more to the story. The first turning point came in a paper he worked on with the economist David Autor. In it was a striking chart that plotted the earnings of American men over five decades, adjusted for inflation. During the 1960s and early 1970s, everyone's wages rose in tandem, regardless of education. But then, around 1980, the wages of those with advanced degrees began to soar, while the wages of high-school graduates and dropouts plunged. Something was making the lives of less-educated Americans demonstrably worse. Was that something technology?

LOCOMOTIVES AND LIGHT BULBS — Stagecoach drivers and lamplighters were thrown out of work — but in the latter stages of the Industrial Revolution, these new technologies created vast numbers of high-paying jobs. Universal History Archive/Getty Images

Acemoglu had a hunch that it was. With Pascual Restrepo, one of his students at the time, he started thinking of automation as something that does two opposite things simultaneously: It steals tasks from humans, while also creating new tasks for humans. How workers ultimately fare, he and Restrepo theorized, depends in large part on the balance of those two actions. When the newly created tasks offset the stolen tasks, workers do fine: They can shuffle into new jobs that often pay better than their old ones. But when the stolen tasks outpace the new ones, displaced workers have nowhere to go. In later empirical work, Acemoglu and Restrepo showed that that was exactly what had happened. Over the four decades following World War II, the two kinds of tasks balanced each other out. But over the next three decades, stolen tasks outpaced the new tasks by a wide margin. In short, automation went both ways. Sometimes it was good, and sometimes it was bad.

It was the bad part that economists were still unconvinced about. So Acemoglu and Restrepo, casting around for more empirical evidence, zeroed in on robots. What they found was stunning: Since 1990, the introduction of every additional robot reduced employment by approximately six humans, while measurably lowering wages. "That was an eye-opener," Acemoglu told me. "People thought it would not be possible to have such negative effects from robots."

Many economists, clinging to the technological orthodoxy, dismissed the effects of robots on human workers as a "transitory phenomenon." In the end, they insisted, technology would prove to be good for everyone. But Acemoglu found that viewpoint unsatisfying. Could you really call something that had been going on for three or four decades "transitory"? By his calculations, robots had thrown more than half a million Americans out of work. Perhaps, in the long run, the benefits of technology would eventually reach most people. But as the economist John Maynard Keynes once quipped, in the long run, we're all dead.

Robots, Acemoglu found, destroyed jobs and lowered wages. "That was an eye-opener," he says. "People thought it would not be possible to have such negative effects from robots." Simon Simard for Insider

So Acemoglu set out to study the long run. First, he and Johnson scoured the course of Western history to see whether there were other times when technology failed to deliver on its promise. Was the recent era of automation, as many economists assumed, an anomaly?

It wasn't, Acemoglu and Johnson found. Take, for instance, medieval times, a period commonly dismissed as a technological wasteland. But the Middle Ages actually saw a series of innovations that included heavy wheeled plows, mechanical clocks, spinning wheels, smarter crop-rotation techniques, the widespread adoption of wheelbarrows, and a greater use of horses. These advancements made farming much more productive. But the reason we remember the period as the Dark Ages is precisely because the gains never reached the peasants who were doing the actual work. Despite all the technological advances, they toiled for longer hours, grew increasingly malnourished, and most likely lived shorter lives. The surpluses created by the new technology went almost exclusively to the elites who sat at the top of society: the clergy, who used their newfound wealth to build soaring cathedrals and consolidate their power.

Or consider the Industrial Revolution, which techno-optimists gleefully point to as Exhibit A of the invariable benefit of innovation. The first, long stretch of the Industrial Revolution was actually disastrous for workers. Technology that mechanized spinning and weaving destroyed the livelihoods of skilled artisans, handing textile jobs to unskilled women and children who commanded lower wages and virtually no bargaining power. People crowding into the cities for factory jobs lived next to cesspools of human waste, breathed coal-polluted air, and were defenseless against epidemics like cholera and tuberculosis that wiped out their families. They were also forced to work longer hours while real incomes stagnated. "I have traversed the seat of war in the peninsula," Lord Byron lamented to the House of Lords in 1812. "I have been in some of the most oppressed provinces of Turkey; but never, under the most despotic of infidel governments, did I behold such squalid wretchedness as I have seen since my return, in the very heart of a Christian country."

If the average person didn't benefit, where did all the extra wealth generated by the new machines go? Once again, it was hoarded by the elites: the industrialists. "Normally, technology gets co-opted and controlled by a pretty small number of people who use it primarily to their own benefits," Johnson told me. "That is the big lesson from human history."

THE AUTOMOBILE — Assembly-line production created lots of blue-collar jobs, as well as new positions in engineering and management. Eric Van Den Brulle/Getty Images

Acemoglu and Johnson recognized that technology hasn't always been bad: At times, they found, it's been nothing short of miraculous. In England, during the second phase of the Industrial Revolution, real wages soared by 123%. The average working day declined to nine hours, child labor was curbed, and life expectancy rose. In the United States, during the postwar boom from 1949 to 1973, real wages grew by almost 3% a year, creating a vibrant and stable middle class. "There has never been, as far as anyone knows, another epoch of such rapid and shared prosperity," Acemoglu and Johnson write, going all the way back to the Ancient Greeks and Romans. It's episodes like these that made economists believe so fervently in the power of technology.

So what separates the good technological times from the bad? That's the central question that Acemoglu and Johnson tackle in "Power and Progress." Two factors, they say, determine the outcome of a new technology. The first is the nature of the technology itself — whether it creates enough new tasks for workers to offset the tasks it takes away. The first phase of the Industrial Revolution, they argue, was dominated by textile machines that replaced skilled spinners and weavers without creating enough new work for them to pursue, condemning them to unskilled gigs with lower wages and worse conditions. In the second phase of the Industrial Revolution, by contrast, steam-powered locomotives displaced stagecoach drivers — but they also created a host of new jobs for engineers, construction workers, ticket sellers, porters, and the managers who supervised them all. These were often highly skilled and highly paid jobs. And by lowering the cost of transportation, the steam engine also helped expand sectors like the metal-smelting industry and retail trade, creating jobs in those areas as well.

"What's special about AI is its speed," Acemoglu says. "It's much faster than past technologies. It's pervasive. It's going to be applied pretty much in every sector. And it's very flexible."

The second factor that determines the outcome of new technologies is the prevailing balance of power between workers and their employers. Without enough bargaining power, Acemoglu and Johnson argue, workers are unable to force their bosses to share the wealth that new technologies generate. And what determines the degree of bargaining power is closely related to democracy. Electoral reforms — kickstarted by the working-class Chartist movement in 1830s Britain — were central to the Industrial Revolution transforming from bad to good. As more men won the right to vote, Parliament became more responsive to the needs of the broader public, passing laws to improve sanitation, crack down on child labor, and legalize trade unions. The growth of organized labor, in turn, laid the groundwork for workers to extract higher wages and better working conditions from their employers in the wake of technological innovations, along with guarantees of retraining when new machines took over their old jobs.

In normal times, such insights might feel purely academic — just another debate over how to interpret the past. But there's one point that both Acemoglu and the tech elite he criticizes agree on: We're in the midst of another technological revolution today with AI. "What's special about AI is its speed," Acemoglu told me. "It's much faster than past technologies. It's pervasive. It's going to be applied pretty much in every sector. And it's very flexible. All of this means that what we're doing right now with AI may not be the right thing — and if it's not the right thing, if it's a damaging direction, it can spread very fast and become dominant. So I think those are big stakes."

Acemoglu acknowledges that his views remain far from the consensus in his profession. But there are indications that his thinking is starting to have a broader impact in the emerging battle over AI. In June, Gita Gopinath, who is second in command at the International Monetary Fund, gave a speech urging the world to regulate AI in a way that would benefit society, citing Acemoglu by name. Klinova, at the Partnership on AI, told me that people high up at the leading AI labs are reading and discussing his work. And Paul Romer, who won the Nobel prize in 2018 for work that showed just how critical innovation is for economic growth, says he's gone through his own change in thinking that mirrors Acemoglu's.

"It was wishful thinking by economists, including me, who wanted to believe that things would naturally turn out well," Romer told me. "What's become more and more clear to me is that that's just not a given. It's blindingly obvious, ex post facto, that there are many forms of technology that can do great harm, and also many forms that can be enormously beneficial. The trick is to have some entity that acts on behalf of society as a whole that says: Let's do the ones that are beneficial, let's not do the ones that are harmful."

Romer praises Acemoglu for challenging the conventional wisdom. "I really admire him, because it's easy to be afraid of getting too far outside the consensus," he says. "Daron is courageous for being willing to try new ideas and pursue them without trying to figure out, where's the crowd? There's too much herding around a narrow set of possible views, and we've really got to keep open to exploring other possibilities."

Early this year, a few weeks before the rest of us, a research initiative organized by Microsoft gave Acemoglu early access to GPT-4. As he played around with it, he was amazed by the responses he got from the bot. "Every time I had a conversation with GPT-4 I was so impressed that at the end I said, 'Thank you,'" he says, laughing. "It's certainly beyond what I would have thought would be feasible a year ago. I think it shows great potential in doing a bunch of things."

But the early experimentation with AI also introduced him to its shortcomings. He doesn't think we're anywhere close to the point where software will be able to do everything humans can — a state that computer scientists call artificial general intelligence. As a result, he and Johnson don't foresee a future of mass unemployment. People will still be working, but at lower wages. "What we're concerned about is that the skills of large numbers of workers will be much less valuable," he told me. "So their incomes will not keep up."

Acemoglu's interest in AI predates the explosion of ChatGPT by many years. That's in part thanks to his wife, Asu Ozdaglar, who heads the electrical engineering and computer science department at MIT. Through her, he received an early education in machine learning, which was making it possible for computers to complete a wider range of tasks. As he dug deeper into automation, he began to wonder about its effects not just on factory jobs, but on office workers. "Robots are important, but how many blue-collar workers do we have left?" he told me. "If you have a technology that automates knowledge work, white-collar work, clerical work, that's going to be much more important for this next stage of automation."

In theory, it's possible that automation will end up being a net good for white-collar workers. But right now, Acemoglu is worried it will end up being a net bad, because society currently doesn't display the conditions necessary to ensure that new technologies benefit everyone. First, thanks to a decadeslong assault on organized labor, only 10% of the working population is unionized — a record low. Without bargaining power, workers won't get a say in how AI tools are implemented on the job, or who shares in the wealth they create. And second, years of misinformation have weakened democratic institutions — a trend that's likely to get worse in the age of deep fakes.

Moreover, Acemoglu is worried that AI isn't creating enough new tasks to offset the ones it's taking away. In a recent study, he found that the companies that hired more AI specialists over the past decade went on to hire fewer people overall. That suggests that even before the ChatGPT era, employers were using AI to replace their human workers with software, rather than using it to make humans more productive – just as they had with earlier forms of digital technologies. Companies, of course, are always eager to trim costs and goose short-term profits. But Acemoglu also blames the field of AI research for the emphasis on replacing workers. Computer scientists, he notes, judge their AI creations by seeing whether their programs can achieve "human parity" — completing certain tasks as well as people.

"It's become second nature to people in the industry and in the broader ecosystem to judge these new technologies in how well they do in being humanlike," he told me. "That creates a very natural pathway to automation and replicating what humans do — and often not enough in how they can be most useful for humans with very different skills" than computers.

COMPUTERS AND ROBOTS — New technologies have destroyed factory and clerical jobs in recent decades, gutting the middle class. Mark Madeo/Future via Getty Image; Issarawat Tattong/Getty Images

Acemoglu argues that building tools that are useful to human workers, instead of tools that will replace them, would benefit not only workers but their employers as well. Why focus so much energy on doing something humans can already do reasonably well, when AI could instead help us do what we never could before? It's a message that Erik Brynjolfsson, another prominent economist studying technological change, has been pushing for a decade now. "It would have been lame if someone had set out to make a car with feet and legs that was humanlike," Brynjolfsson told me. "That would have been a pretty slow-moving car." Building AI with the goal of imitating humans similarly fails to realize the true potential of the technology.

"The future is going to be largely about knowledge work," Acemoglu says. "Generative AI could be one of the tools that make workers much more productive. That's a great promise. There's a high road here where you can actually increase productivity, make profits, as well as contribute to social good — if you find a way to use this technology as a tool that empowers workers."

In March, Acemoglu signed a controversial open letter calling on AI labs to pause the training of their systems for at least six months. He didn't think companies would adopt the moratorium, and he disagreed with the letter's emphasis on the existential risk that AI poses to humanity. But he joined the list of more than a thousand other signatories anyway — a group that included the AI scientist Yoshua Bengio, the historian Yuval Noah Harari, the former presidential candidate Andrew Yang, and, strangely, Elon Musk. "I thought it was remarkable in bringing together an amazing cross-section of very different people who were articulating concerns about the direction of tech," Acemoglu told me. "High-profile efforts to say, 'Look, there might be something wrong with the direction of change, and we should take a look and think about regulation' — that's important."

When society is ready to start talking about specific ways to ensure that AI leads to shared prosperity, Acemoglu and Johnson devote an entire chapter at the end of their book to what they view as promising solutions. Among them: Taxing wages less, and software more, so companies won't be incentivized to replace their workers with technology. Fostering new organizations that advocate the needs of workers in the age of AI, the way Greenpeace pushes for climate activism. Repealing Section 230 of the Communications Decency Act, to force internet companies to stop promoting the kind of misinformation that hurts the democratic process. Creating federal subsidies for technology that complements workers instead of replacing them. And, most broadly, breaking up Big Tech to foster greater competition and innovation.

Economists — at least the ones who aren't die-hard conservatives — don't object in general to Acemoglu's proposals to increase the bargaining power of workers. But many struggle with the idea of trying to steer AI research and implementation in a direction that's beneficial for workers. Some question whether it's even possible to predict which technologies will create enough new tasks to offset the ones they replace. But in my private conversations with economists, I've also sensed an underlying discomfort at the prospect of messing with how technology unfolds in the marketplace. Since 1800, when the Industrial Revolution was first taking hold in the US, GDP per capita — the most common measure of living standards — has grown more than twentyfold. The invisible hand of technology, most economists continue to believe, will ultimately benefit everyone, if left to its own devices.

I used to think that way, too. A decade ago, when I first began reporting on the likely effects of machine learning, the consensus was that careers like mine — ones that require a significant measure of creativity and social intelligence — were still safe. In recent months, even as it became clear how well ChatGPT can write, I kept reassuring myself with the conventional wisdom. AI is going to make us more productive, and that will be great for society. Now, after reviewing Acemoglu's research, I've been hearing a new mantra in my head: We're all fukked.

That's not the takeaway Acemoglu intended. In our conversations, he told me over and over that we're not powerless in the face of the dystopian future he foresees — that we have the ability to steer the way AI unfolds. Yes, that will require passing a laundry list of huge policies, in the face of a tech lobby with unlimited resources, through a dysfunctional Congress and a deeply pro-business Supreme Court, amid a public fed a digital firehose of increasingly brazen lies. And yes, there are days when he doesn't feel all that great about our chances either.

"I realize this is a very, very tall order," Acemoglu told me. But you know whose chances looked even grimmer? Workers in England during the mid-19th century, who endured almost 100 years of a tech-driven dystopia. At the time, few had the right to vote, let alone to unionize. The Chartists who demanded universal male suffrage were jailed. The Luddites who broke the textile machines that displaced them were exiled to Australia or hanged. And yet they recognized that they deserved more, and they fought for the kinds of rights that translated into higher wages and a better life for them and, two centuries later, for us. Had they not bothered, the march of technology would have turned out very differently.

"We have greatly benefited from technology, but there's nothing automatic about that," Acemoglu told me. "It could have gone in a very bad direction had it not been for institutional, regulatory, and technological adjustments. That's why this is a momentous period: because there are similar choices that need to be made today. The conclusion to be drawn is not that technology is workers' enemy. It's that we need to make sure we end up with directions of technology that are more conducive to wage growth and shared prosperity." That's why Acemoglu dedicated "Power and Progress" not only to his wife but to his two sons. History may point to how destructive AI is likely to be. But it doesn't have to repeat itself.

"Our book is an analysis," he told me. "But it also encourages people to be involved for a better future. I wrote it for the next generation, with the hope that it will get better."

It's been only 7 months since ChatGPT launched yet so much has happened in AI.

Here are ALL the major developments you NEED to know:

GENERATIVE AI

- ChatGPT launched on Nov 30, 2022, crossed one million users in 5 days & 100 million users in 60 days.

- OpenAI launched ChatGPT app for iOS.

- Bard launched to the public for FREE with an in-built browsing feature.

- Anthropic's Claude 2 launched to the public. It has a context window of 100K tokens and can take the entirety of "The Great Gatsby" as input.

- Bloomberg launched BloombergGPT and predicted a $1.3 trillion generative AI market.

- Meta launched Voicebox - an all-in-one generative speech model that can translate into 6 languages.

- An AI-generated image showing an explosion near the Pentagon caused a $500 billion drop in the S&P 500 index.

- Opera launched a new browser "One" with free ChatGPT integration (similar to Edge-Bing).

- An AI agent named Voyager (MineDojo) played Minecraft & wrote its own code with help from GPT-4.

- Runway released Gen-2, enabling you to create videos from text in seconds.

- AutoGPT launched. It breaks down tasks into sub-tasks and runs in automatic loops.

- GPT Engineer launched. It will generate the entire codebase in a prompt.

- Adobe introduced a generative AI fill feature for Photoshop.

- NVIDIA launched ACE, bringing conversational NPC AI characters to life.

- Microsoft brought AI to Office & Windows with Copilot.

- Forever Voices turned an influencer into a digital girlfriend Caryn AI.

- Brian Sullivan interviews 'FAUX BRIAN' (his AI version) on live TV.

- AI-generated QR codes become a reality.

LLMs

- Meta's LLaMA model leaked, starting a new race for open-source LLMs.

- GPT-4 shocked the world by scoring in the 90th percentile on the bar exam, 88th on the LSAT, 80th on GRE Quantitative, and 99th on GRE Verbal.

- Med-PaLM 2 outperforms expert doctors on the MedQA test.

- Open-source models like Vicuna & Falcon-40B match the output quality of ChatGPT (GPT-3.5) & Bard.

China aims to replicate human brain in bid to dominate global AI

The pursuit of the most advanced AI—human-like artificial general intelligence—has prompted concerns among experts about potential dangers if it runs amok.

www.newsweek.com

BY DIDI KIRSTEN TATLOW / SENIOR REPORTER, INTERNATIONAL AFFAIRS ON 9/19/23 AT 5:00 AM EDT

Aiming to be first in the world to have the most advanced forms of artificial intelligence while also maintaining control over more than a billion people, elite Chinese scientists and their government have turned to something new, and very old, for inspiration: the human brain.

In one of thousands of efforts underway, they are constructing a "city brain" to enhance the computers at the core of the "smart cities" that already scan the country from Beijing's broad avenues to small-town streets, collecting and processing terabytes of information from intricate networks of sensors, cameras and other devices that monitor traffic, human faces, voices and gait, and even look for "gathering fights."

Equipped with surveillance and visual processing capabilities modelled on human vision, the new "brain" will be more effective, less energy hungry, and will "improve governance," its developers say. "We call it bionic retina computing," Gao Wen, a leading artificial intelligence researcher, wrote in the paper "City Brain: Challenges and Solution."

The work by Gao and his cutting-edge Peng Cheng Laboratory in the southern city of Shenzhen represents far more than just China's drive to expand its ever more pervasive monitoring of its citizens: it is also an indication of China's determination to win the race for what is known as artificial general intelligence.

This is the AI that could not only out-think people on a vast number of tasks and give whoever controls it an enormous strategic advantage, but which has also prompted warnings from experts in the West of a potential threat to the existence of civilization if it outwits its human masters and runs amok.

Gao's is just one of about 1,000 papers seen by Newsweek that show China is forging ahead in the race for artificial general intelligence, which is a step change beyond the large language models such as ChatGPT or Bard already taking societies by storm with their ability to generate text and images and find vast amounts of information quickly.

"Artificial general intelligence is the 'atomic bomb' of the information field and the 'game winner' in the competition between China and the United States," another leading Chinese AI scientist, Zhu Songchun, said in July in his hometown of Ezhou by Wuhan in Hubei province, according to Jingchu Net, an online website of the Hubei Daily, a Communist Party media outlet.

Just as in the 1950s and '60s when Chinese scientists worked around the clock to build the atomic bomb, intercontinental missile and satellite, "We need to develop AI like the 'two bombs and one satellite' and form an AI 'ace army' that represents the national will," Zhu said.

China aims to lead the world in AI by 2030, a goal made clear in the official "China Brain Project" announced in 2016. AI and brain science are also two of half a dozen "frontier fields" named in the state's 15-year national science plan running from 2021 to 2035.

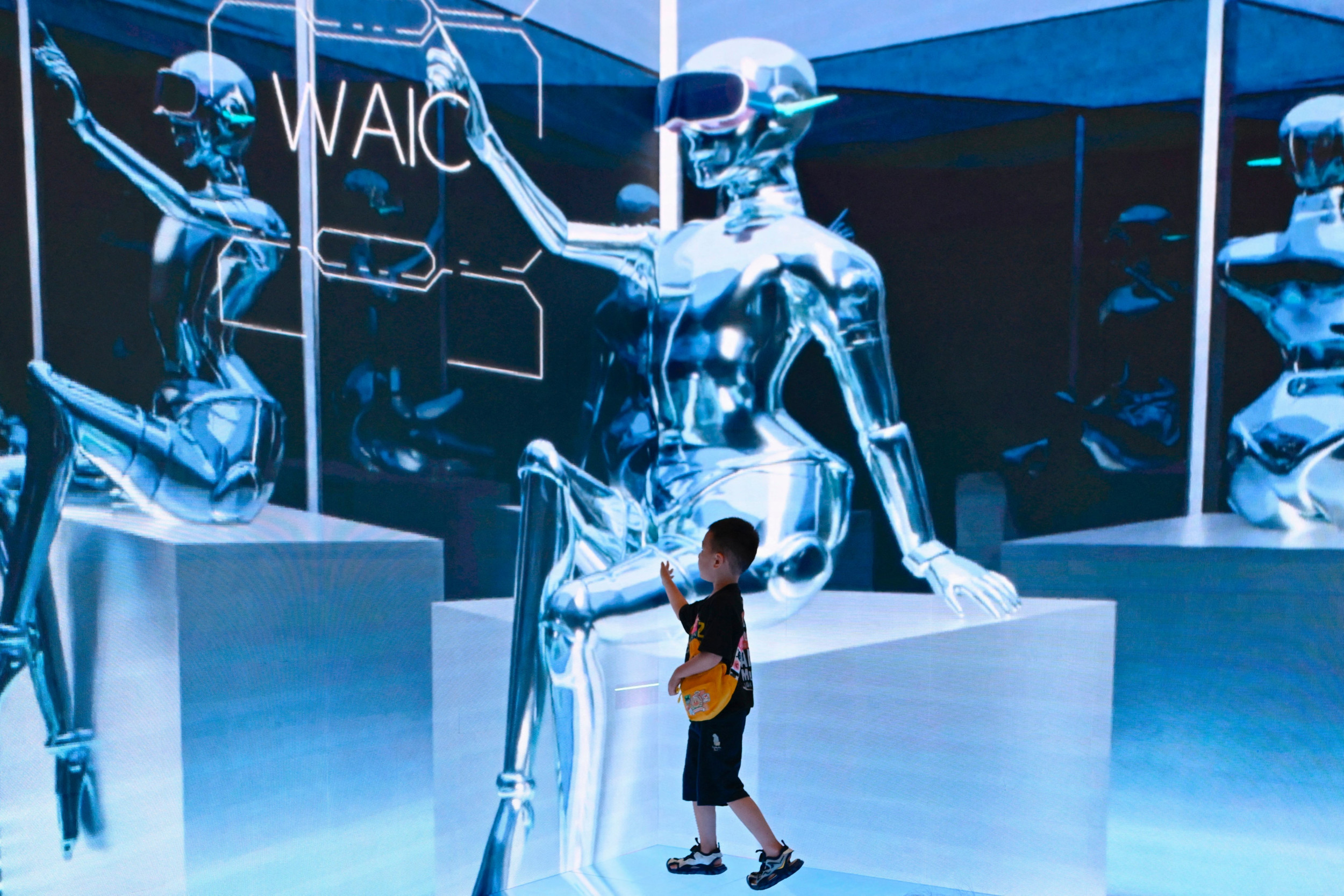

A woman scans her laptop in front of an image of an AI grid over the Great Wall of China during the World Artificial Intelligence Conference (WAIC) in Shanghai in July. A report published in August by a Washington, D.C.-based futurist think tank warns of grave dangers for humanity from artificial general intelligence. PHOTO BY WANG ZHAO/AFP VIA GETTY IMAGES

There's AI—and then there's AGI

There are major differences between the "narrow AI" systems in use now, which cannot "think" for themselves but can perform tasks such as writing a person's term paper or identifying their face, versus the more ambitious, artificial general intelligence that could one day do better than humans at many tasks.

U.S. scientists are also working on AGI, though efforts are mostly scattered, unlike in China where research institutes devoted to it have received many hundreds of millions of dollars in state funding, say Western AI scientists who have worked with their Chinese counterparts in cutting-edge fields but who asked not to be identified due to political sensitivities there surrounding the topic.

In a report published in August by The Millennium Project, a Washington, D.C.-based futurist think tank that warns of grave dangers for humanity from AGI, AI doyen Geoffrey Hinton said his expectations for when it might be achieved had dropped recently from 50 years to less than 20, and possibly as little as five.

"We might be close to the computers coming up with their own ideas for improving themselves and it could just go fast. We have to think hard about how to control that," Hinton said.

A major difference between the West and China is the public debate over the dangers AI, including very advanced AI, could pose.

"AI labs are recklessly rushing to build more and more powerful systems, with no robust solutions to make them safe," Anthony Aguirre of the U.S.-based Future of Life Institute told Newsweek, referring largely to work in the U.S. More than 33,000 scientists signed a call by the institute in March for a six-month pause on some AI development—though it went unheeded.

Few such existential concerns are expressed in public in China.

In April, Chinese leader Xi Jinping told the Politburo, "Emphasize the importance of artificial general intelligence, create an innovation system (for it)," according to state news agency Xinhua. Xi has frequently called for Chinese scientists to pursue AI at high speed—at least 13 times in recent years. Xi also told the Politburo that scientists should pay attention to risk, yet so far the key AI-related risk cited in China is political, with a new law introduced in August putting first the rule that AI "must adhere to socialist core values."

Li Zheng, a researcher at the China Institute for Contemporary International Relations in Beijing, wrote in July that China was "more concerned about national security and public interest" than the E.U. or the U.S.

"The U.S. and Western countries place more emphasis on anti-bias and anti-discrimination in AI ethics, trying to avoid the interests of ethnic minorities and marginalized groups from being affected by algorithmic discrimination," Li wrote in the Chinese-language edition of Global Times.

"Developing countries such as China emphasize more on the strategic design and regulatory function of the government."

Li's institute belongs to the Ministry of State Security.

China's AGI Research

The extent of China's research into AGI was highlighted in a study by Georgetown's Center for Security and Emerging Technology titled "China's Cognitive AI Research" that concluded China was on the right path and called for greater scrutiny of the Chinese efforts by the U.S.

"China's cognitive AI research will improve its ability to field robots, make smarter and quicker decisions, accelerate innovation, run influence operations, and perform other high-level functions reliably with greater autonomy and less computational cost, elevating global AI risk and the strategic challenge to other nations," the authors said in the July study.

Examining thousands of Chinese scientific papers on AI published between 2018 and 2022, the team of authors identified 850 that they say show the country is seriously pursuing AGI including via brain science, the goal singled out in China's current Five Year Plan.

The studies include brain science-inspired investigations of vision such as Gao's, perception aiming for cognition, pattern recognition research, investigations of how to mimic human brain neural networks in computers, and efforts that could ultimately lead to human-robot hybrids, for example by placing a large-scale brain simulation on a robot body.

Illustrating that, a report in late 2022 by CCTV, China's state broadcaster, showed a robot manipulating a door handle to open a cupboard as a scientist explained that the "depth camera" affixed to its shoulders can also "recognize a person's physique and analyze their intentions based on this visual information."

Significantly, the Georgetown researchers said, "There was an unusually large number of papers on facial, gait, and emotion recognition" in the Chinese papers, as well as sentiment analysis, "errant" behavior prediction and military applications.

In addition to the papers identified in the study, others seen by Newsweek explored human-robot value alignment, "Amygdala Inspired Affective Computing" (referring to a small part of the brain that processes fear), industrial applications such as "A closed-loop brain-computer interface with augmented reality feedback for industrial human-robot collaboration," and, from July 2023, "BrainCog: A Spiking Neural Network based, Brain-inspired Cognitive Intelligence Engine for Brain-inspired AI and Brain Simulation."

A forthcoming study by the U.S. researchers will focus on China's use of "brain-computer interface" to enhance the cognitive power of healthy humans, meaning essentially, to mold their intelligence and perhaps even, given the political environment in China, their ideology.