The A.I Megathread (LLM , GPT , Development)

qwenlm.github.io

Introducing Qwen1.5

February 4, 2024 · 14 min · 2835 words · Qwen Team

Introduction

In recent months, our focus has been on developing a “good” model while optimizing the developer experience. As we progress towards Qwen1.5, the next iteration in our Qwen series, this update arrives just before the Chinese New Year.

With Qwen1.5, we are open-sourcing base and chat models across six sizes: 0.5B, 1.8B, 4B, 7B, 14B, and 72B. In line with tradition, we’re also providing quantized models, including Int4 and Int8 GPTQ models, as well as AWQ and GGUF quantized models. To enhance the developer experience, we’ve merged Qwen1.5’s code into Hugging Face transformers, making it accessible with transformers>=4.37.0 without needing trust_remote_code. We’ve collaborated with frameworks like vLLM and SGLang for deployment, AutoAWQ and AutoGPTQ for quantization, Axolotl and LLaMA-Factory for finetuning, and llama.cpp for local LLM inference, all of which now support Qwen1.5. The Qwen1.5 series is available on platforms such as Ollama and LMStudio. Additionally, API services are offered not only on DashScope but also on together.ai, with global accessibility. Visit here to get started, and we recommend trying out Qwen1.5-72B-chat.
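As a rough illustration of the version requirement above, the sketch below checks whether an installed transformers release meets the 4.37.0 minimum before loading a model through the standard Auto classes. The helper names (`parse_version`, `supports_qwen15`, `load_chat_model`) and the default model id `Qwen/Qwen1.5-0.5B-Chat` are assumptions made for this example, not something the post prescribes.

```python
import re
from importlib import metadata

# Minimum transformers version quoted in the post.
MIN_TRANSFORMERS = (4, 37, 0)


def parse_version(version: str) -> tuple:
    """Return the leading numeric components, e.g. '4.38.0.dev0' -> (4, 38, 0)."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", version)
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    return tuple(int(part or 0) for part in match.groups())


def supports_qwen15(installed=None) -> bool:
    """True if the given (or installed) transformers version is >= 4.37.0.

    Pre-release suffixes such as '.dev0' are ignored by this simple check.
    """
    if installed is None:
        try:
            installed = metadata.version("transformers")
        except metadata.PackageNotFoundError:
            return False
    return parse_version(installed) >= MIN_TRANSFORMERS


def load_chat_model(model_id="Qwen/Qwen1.5-0.5B-Chat"):
    """Load tokenizer and model; the model id is an assumed example checkpoint.

    No trust_remote_code argument is passed, in line with the post.
    Calling this downloads weights, so it is not invoked here.
    """
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(model_id)
    return tokenizer, model
```

Calling `supports_qwen15()` with no argument inspects the local environment; passing a string such as `"4.36.2"` checks that version instead.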

This release brings substantial improvements to the alignment of chat models with human preferences and enhanced multilingual capabilities. All models now uniformly support a context length of up to 32768 tokens. There have also been minor improvements in the quality of base language models that may benefit your finetuning endeavors. This step represents a small stride toward our objective of creating a truly “good” model.

Performance

To provide a better understanding of the performance of Qwen1.5, we have conducted a comprehensive evaluation of both base and chat models on different capabilities, including basic capabilities such as language understanding, coding, reasoning, multilingual capabilities, human preference, agent, retrieval-augmented generation (RAG), etc.

Basic Capabilities

To assess the basic capabilities of language models, we have conducted evaluations on traditional benchmarks, including MMLU (5-shot), C-Eval, HumanEval, GSM8K, BBH, etc.

At every model size, Qwen1.5 demonstrates strong performance across the diverse evaluation benchmarks. In particular, Qwen1.5-72B outperforms Llama2-70B across all benchmarks, showcasing its exceptional capabilities in language understanding, reasoning, and math.

In light of the recent surge in interest for small language models, we have compared Qwen1.5 with sizes smaller than 7 billion parameters, against the most outstanding small-scale models within the community. The results are shown below:

We can confidently assert that Qwen1.5 base models under 7 billion parameters are highly competitive with the leading small-scale models in the community. In the future, we will continue to improve the quality of small models and explore methods for effectively transferring the advanced capabilities of larger models into smaller ones.

Aligning with Human Preference

Alignment aims to enhance instruction-following capabilities of LLMs and help provide responses that are closely aligned with human preferences. Recognizing the significance of integrating human preferences into the learning process, we effectively employed techniques such as Direct Preference Optimization (DPO) and Proximal Policy Optimization (PPO) in aligning the latest Qwen series.

However, assessing the quality of such chat models poses a significant challenge. Admittedly, while comprehensive human evaluation is the optimal approach, it faces significant challenges pertaining to scalability and reproducibility. Therefore, we initially evaluate our models on two widely used benchmarks, utilizing advanced LLMs as judges: MT-Bench and Alpaca-Eval. The results are presented below:

We notice that there is non-negligible variance in the scores on MT-Bench, so we run the evaluation three times with different seeds and report the average score with standard deviation.
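The reporting scheme described above (mean with standard deviation over three seeded runs) can be sketched as follows. The scores are made-up placeholders, not results from the post, and the use of the sample standard deviation is an assumption, since the post does not say which variant was used.

```python
from statistics import mean, stdev


def summarize_runs(scores):
    """Return (mean, sample standard deviation) for repeated benchmark runs."""
    if len(scores) < 2:
        raise ValueError("need at least two runs to estimate a standard deviation")
    return mean(scores), stdev(scores)


# Hypothetical scores from three runs with different seeds.
runs = [8.0, 8.5, 9.0]
avg, sd = summarize_runs(runs)
print(f"{avg:.2f} ± {sd:.2f}")  # prints "8.50 ± 0.50"
```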

Despite still significantly trailing behind GPT-4-Turbo, the largest open-source Qwen1.5 model, Qwen1.5-72B-Chat, exhibits superior performance, surpassing Claude-2.1, GPT-3.5-Turbo-0613, Mixtral-8x7b-instruct, and TULU 2 DPO 70B, being on par with Mistral Medium, on both MT-Bench and Alpaca-Eval v2.

Furthermore, although the scoring of LLM Judges may seemingly correlate with the lengths of responses, our observations indicate that our models do not generate lengthy responses to manipulate the bias of LLM judges. The average length of Qwen1.5-Chat on AlpacaEval 2.0 is only 1618, which aligns with the length of GPT-4 and is shorter than that of GPT-4-Turbo. Additionally, our experiments with our web service and app also reveal that users prefer the majority of responses from the new chat models.

Multilingual Understanding of Base Models

We have carefully selected a diverse set of 12 languages from Europe, East Asia, and Southeast Asia to thoroughly evaluate the multilingual capabilities of our foundational model. In order to accomplish this, we have curated test sets from the community’s open-source repositories, covering four distinct dimensions: Exams, Understanding, Translation, and Math. The table below provides detailed information about each test set, including evaluation settings, metrics, and the languages they encompass:

The base models of Qwen1.5 showcase impressive multilingual capabilities, as demonstrated by their performance across a diverse set of 12 languages. In evaluations covering various dimensions such as exams, understanding, translation, and math, Qwen1.5 consistently delivers strong results. From languages like Arabic, Spanish, and French to Japanese, Korean, and Thai, Qwen1.5 demonstrates its ability to comprehend and generate high-quality content across different linguistic contexts. To take a step further, we evaluate the multilingual capabilities of chat models in a number of languages by calculating the win-tie rate against GPT-4. Results are shown below:

These results demonstrate the strong multilingual capabilities of Qwen1.5 chat models, which can serve downstream applications such as translation, language understanding, and multilingual chat. We also believe that the improvements in multilingual capabilities can level up the general capabilities.
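For readers unfamiliar with the win-tie rate used in the comparison against GPT-4, here is a minimal sketch. It assumes the metric is the fraction of pairwise judgments in which the model either wins or ties; the outcome labels and the sample judgments are hypothetical.

```python
from collections import Counter

VALID_OUTCOMES = {"win", "tie", "loss"}


def win_tie_rate(outcomes):
    """Fraction of comparisons that the evaluated model won or tied."""
    if not outcomes:
        raise ValueError("no comparisons given")
    counts = Counter(outcomes)
    unknown = set(counts) - VALID_OUTCOMES
    if unknown:
        raise ValueError(f"unexpected outcome labels: {sorted(unknown)}")
    return (counts["win"] + counts["tie"]) / len(outcomes)


# Hypothetical per-prompt judgments against the reference model.
judgments = ["win", "tie", "loss", "loss", "win"]
print(win_tie_rate(judgments))  # prints 0.6
```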

Support of Long Context

With the increasing demand for long-context understanding, we have expanded the capability of all models to support contexts up to 32K tokens. We have evaluated the performance of Qwen1.5 models on the L-Eval benchmark, which measures the ability of models to generate responses based on long context. The results are shown below:

In terms of performance, even a small model like Qwen1.5-7B-Chat is competitive with GPT-3.5 on 4 out of 5 tasks. Our best model, Qwen1.5-72B-Chat, significantly outperforms GPT3.5-turbo-16k and only slightly falls behind GPT4-32k. These results highlight our outstanding performance within 32K tokens, yet they do not imply that our models are limited to supporting only 32K tokens. You can modify max_position_embeddings in config.json to a larger value to see if the model performance is still satisfactory for your tasks.

Capabilities to Connect with External Systems

Large language models (LLMs) are popular in part due to their ability to integrate external knowledge and tools. Retrieval-Augmented Generation (RAG) has gained traction as it mitigates common LLM issues like hallucination, real-time data shortage, and private information handling. Additionally, strong LLMs typically excel at using APIs and tools via function calling, making them ideal for serving as AI agents.

We first assess the performance of Qwen1.5-Chat on RGB, an RAG benchmark for which we have not performed any specific optimization:

GitHub - QwenLM/Qwen1.5: Qwen1.5 is the improved version of Qwen, the large language model series developed by Qwen team, Alibaba Cloud.

ShinojiResearch/Senku-70B-Full · Hugging Face

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

huggingface.co

BRIA RMBG 1.4 - a Hugging Face Space by briaai

Upload an image and the tool will cut out the subject, giving you a picture with a transparent background. You receive the original foreground preserved, ready to use in designs, posts, or any proj...

huggingface.co

briaai/RMBG-1.4 · Hugging Face

huggingface.co

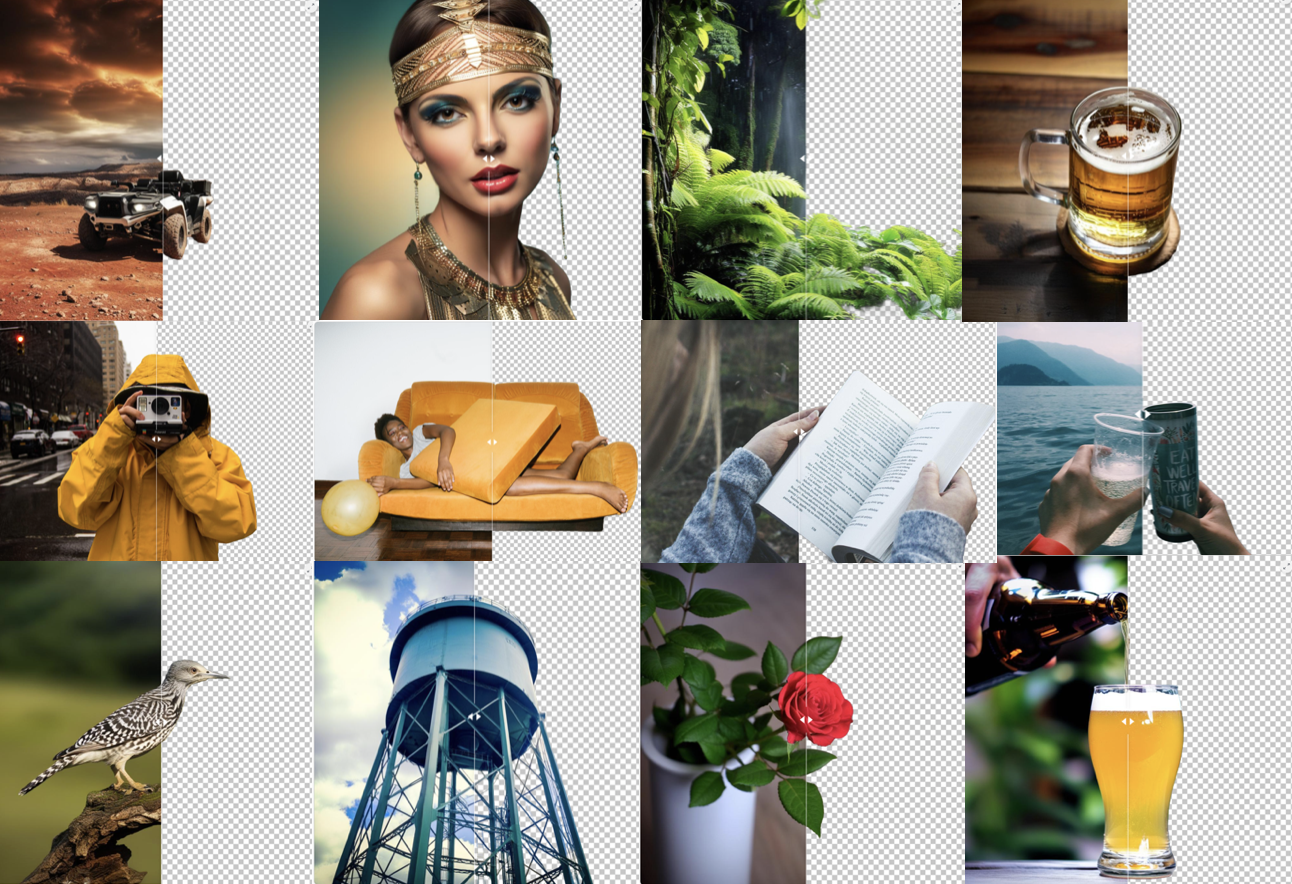

BRIA Background Removal v1.4 Model Card

RMBG v1.4 is our state-of-the-art background removal model, designed to effectively separate foreground from background in a range of categories and image types. This model has been trained on a carefully selected dataset, which includes: general stock images, e-commerce, gaming, and advertising content, making it suitable for commercial use cases powering enterprise content creation at scale. The accuracy, efficiency, and versatility currently rival leading open source models. It is ideal where content safety, legally licensed datasets, and bias mitigation are paramount.

Developed by BRIA AI, RMBG v1.4 is available as an open-source model for non-commercial use.

Model Description

- Developed by: BRIA AI

- Model type: Background Removal

- License: bria-rmbg-1.4

- The model is released under an open-source license for non-commercial use.

- Commercial use is subject to a commercial agreement with BRIA. Contact Us for more information.

- Model Description: BRIA RMBG 1.4 is a saliency segmentation model trained exclusively on a professional-grade dataset.

- BRIA: Resources for more information: BRIA AI

[Submitted on 11 Dec 2023 (v1), last revised 3 Jan 2024 (this version, v2)]

EQ-Bench: An Emotional Intelligence Benchmark for Large Language Models

Samuel J. Paech

We introduce EQ-Bench, a novel benchmark designed to evaluate aspects of emotional intelligence in Large Language Models (LLMs). We assess the ability of LLMs to understand complex emotions and social interactions by asking them to predict the intensity of emotional states of characters in a dialogue. The benchmark is able to discriminate effectively between a wide range of models. We find that EQ-Bench correlates strongly with comprehensive multi-domain benchmarks like MMLU (Hendrycks et al., 2020) (r=0.97), indicating that we may be capturing similar aspects of broad intelligence. Our benchmark produces highly repeatable results using a set of 60 English-language questions. We also provide open-source code for an automated benchmarking pipeline at this https URL and a leaderboard at this https URL
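The r=0.97 figure in the abstract is a correlation coefficient between per-model scores on two benchmarks. As a minimal sketch, assuming it is the usual Pearson coefficient, it could be computed as below. The score lists are invented placeholders used only to exercise the function and are not taken from the paper.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length score lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length with at least two items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / sqrt(var_x * var_y)


# Placeholder per-model scores on two benchmarks (not real data).
benchmark_a = [30.0, 45.0, 60.0, 80.0]
benchmark_b = [35.0, 50.0, 58.0, 85.0]
print(round(pearson_r(benchmark_a, benchmark_b), 3))
```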

Subjects: Computation and Language (cs.CL); Artificial Intelligence (cs.AI)

ACM classes: I.2.7

Cite as: arXiv:2312.06281 [cs.CL] (or arXiv:2312.06281v2 [cs.CL] for this version)

Submission history

From: Samuel Paech

[v1] Mon, 11 Dec 2023 10:35:32 UTC (405 KB)

[v2] Wed, 3 Jan 2024 12:20:35 UTC (405 KB)

3 rounds of self-improvement seem to be a saturation limit for LLMs. I haven't yet seen a compelling demo of LLM self-bootstrapping that is nearly as good as AlphaZero, which masters Go, Chess, and Shogi from scratch by nothing but self-play.

Reading "Self-Rewarding Language Models" from Meta (arxiv.org/abs/2401.10020). It's a very simple idea that iteratively bootstraps a single LLM that proposes prompts, generates responses, and rewards itself. The paper says that reward modeling ability, which is no longer a fixed & separate model, improves along with the main model. Yet it still saturates after 3 iterations, which is the maximum shown in the experiments.

Thoughts:

- Saturation happens because the rate of improvement for reward modeling (critic) is slower than that for generation (actor). At the beginning, there is always a gap to be exploited because classification is inherently easier than generation. But the actor eventually catches up with the critic in just 3 rounds, even though both are improving.

- In another paper, "Reinforced Self-Training (ReST) for Language Modeling" (arxiv.org/abs/2308.08998), the iteration number is also 3 before hitting diminishing returns.

- I don't think the gap between critic and actor is sustainable unless there is an external driving signal, such as symbolic theorem verification, unit test suites, or compiler feedback. But these are highly specialized to a particular domain and not enough for the general-purpose self-improvement we dream of.
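To make the actor/critic framing above concrete, here is a rough sketch of the data-building step in one self-rewarding round: the same model generates several responses per prompt and scores them itself, and the scores are turned into preference pairs for training. The pairing rule used here (highest-scored versus lowest-scored response, with ties skipped) is an assumption for illustration, and `generate` and `judge` are hypothetical callables standing in for the actor and critic roles of one model.

```python
import itertools
from typing import Callable, List, Tuple


def build_preference_pairs(
    prompts: List[str],
    generate: Callable[[str], str],
    judge: Callable[[str, str], float],
    samples_per_prompt: int = 4,
) -> List[Tuple[str, str, str]]:
    """Return (prompt, chosen, rejected) triples from self-scored samples."""
    pairs = []
    for prompt in prompts:
        candidates = [generate(prompt) for _ in range(samples_per_prompt)]
        scored = [(judge(prompt, response), response) for response in candidates]
        best = max(scored, key=lambda item: item[0])
        worst = min(scored, key=lambda item: item[0])
        # A tie carries no preference signal, so the prompt is skipped.
        if best[0] > worst[0]:
            pairs.append((prompt, best[1], worst[1]))
    return pairs


# Toy stand-ins: canned responses, and a "critic" that simply prefers longer text.
_canned = itertools.cycle(["ok", "a longer answer", "mid one"])


def toy_generate(prompt: str) -> str:
    return next(_canned)


def toy_judge(prompt: str, response: str) -> float:
    return float(len(response))


pairs = build_preference_pairs(["Explain DPO."], toy_generate, toy_judge, samples_per_prompt=3)
print(pairs)
```

If the critic cannot separate the actor's samples, `build_preference_pairs` returns nothing for that prompt, which is one way to picture the saturation described in the post: no gap, no training signal.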

Lots of research ideas to be explored here.

mlx-community/phi-2-dpo-7k · Hugging Face

huggingface.co

Image to Music v2 - a Hugging Face Space by fffiloni

Get a music sample inspired by the mood of an image

huggingface.co