The A.I Megathread (LLM , GPT , Development)

Magic Mulatto

Here Today, Gone Tomorrow. . .

It’s over, breh…

GitHub - google-research-datasets/presto: A Multilingual Dataset for Parsing Realistic Task-Oriented Dialogs

PRESTO – A multilingual dataset for parsing realistic task-oriented dialogues

Posted by Rahul Goel and Aditya Gupta, Software Engineers, Google Assistant. Virtual assistants are increasingly integrated into our daily routines....

ai.googleblog.com

Pause Giant AI Experiments: An Open Letter - Future of Life Institute

We call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4.

Signatures: 1123

AI systems with human-competitive intelligence can pose profound risks to society and humanity, as shown by extensive research[1] and acknowledged by top AI labs.[2] As stated in the widely-endorsed Asilomar AI Principles, Advanced AI could represent a profound change in the history of life on Earth, and should be planned for and managed with commensurate care and resources. Unfortunately, this level of planning and management is not happening, even though recent months have seen AI labs locked in an out-of-control race to develop and deploy ever more powerful digital minds that no one – not even their creators – can understand, predict, or reliably control.

Contemporary AI systems are now becoming human-competitive at general tasks,[3] and we must ask ourselves: Should we let machines flood our information channels with propaganda and untruth? Should we automate away all the jobs, including the fulfilling ones? Should we develop nonhuman minds that might eventually outnumber, outsmart, obsolete and replace us? Should we risk loss of control of our civilization? Such decisions must not be delegated to unelected tech leaders. Powerful AI systems should be developed only once we are confident that their effects will be positive and their risks will be manageable. This confidence must be well justified and increase with the magnitude of a system's potential effects. OpenAI's recent statement regarding artificial general intelligence, states that "At some point, it may be important to get independent review before starting to train future systems, and for the most advanced efforts to agree to limit the rate of growth of compute used for creating new models." We agree. That point is now.

Therefore, we call on all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4. This pause should be public and verifiable, and include all key actors. If such a pause cannot be enacted quickly, governments should step in and institute a moratorium.

AI labs and independent experts should use this pause to jointly develop and implement a set of shared safety protocols for advanced AI design and development that are rigorously audited and overseen by independent outside experts. These protocols should ensure that systems adhering to them are safe beyond a reasonable doubt.[4] This does not mean a pause on AI development in general, merely a stepping back from the dangerous race to ever-larger unpredictable black-box models with emergent capabilities.

AI research and development should be refocused on making today's powerful, state-of-the-art systems more accurate, safe, interpretable, transparent, robust, aligned, trustworthy, and loyal.

In parallel, AI developers must work with policymakers to dramatically accelerate development of robust AI governance systems. These should at a minimum include: new and capable regulatory authorities dedicated to AI; oversight and tracking of highly capable AI systems and large pools of computational capability; provenance and watermarking systems to help distinguish real from synthetic and to track model leaks; a robust auditing and certification ecosystem; liability for AI-caused harm; robust public funding for technical AI safety research; and well-resourced institutions for coping with the dramatic economic and political disruptions (especially to democracy) that AI will cause.

Humanity can enjoy a flourishing future with AI. Having succeeded in creating powerful AI systems, we can now enjoy an "AI summer" in which we reap the rewards, engineer these systems for the clear benefit of all, and give society a chance to adapt. Society has hit pause on other technologies with potentially catastrophic effects on society.[5] We can do so here. Let's enjoy a long AI summer, not rush unprepared into a fall.

skyrunner1

Superstar

This is when AI takes over and says they gonna take over from here and mash the gas.. I seen this movie a couple times

AI Expert Explains How ChatGPT Really Works -- and Why It's Designed to Produce "the Most Mediocre Web Content You Can Imagine"

nwn.blogs.com

WEDNESDAY, MARCH 08, 2023

Hilary Mason, formerly Chief Scientist for Bitly and GM of Machine Learning at Cloudera, recently wrote this fun and incredibly effective explanation for ChatGPT that I highly recommend sharing with, well, everyone:

How does ChatGPT work, really?

It can feel like a wizard behind a curtain, or like a drunk friend, but ChatGPT is just… math.

Let’s talk about how to think about Large Language Models (LLMs) like ChatGPT so you can understand what to expect from them and better imagine what you might actually use them for.

A language model is a compressed representation of the patterns in language, trained on all of the language on the internet, and some books.

That’s it.

The model is trained by taking a very large data set — in this case, text from sites like Reddit (including any problematic content) and Wikipedia, and books from Project Gutenberg — extracting tokens, which are essentially the words in the sentences, and then computing the relationships in the patterns of how those words are used. That representation can then be used to generate patterns that look like the patterns it's seen before.

If I sing the words “Haaappy biiiirthdayyy to ____,” most English speakers know that the next word is likely to be “you.” It might be other words like “me,” or “them,” but it’s very unlikely to be words like “allocative” or “pudding.” (No shade to pudding. I wish pudding the happiest of birthdays.)

You weren’t born knowing the birthday song; you know the next likely words in the song because you’ve heard it sung lots of times. That’s basically what’s happening under the hood with a language model.
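To make the birthday-song idea concrete, here is a minimal sketch of next-word prediction by counting. It is a toy bigram model, not how ChatGPT is actually built (real LLMs use neural networks over subword tokens), and the corpus and the names `CORPUS`, `tokenize`, `train_bigrams`, and `next_word_probs` are made up for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus standing in for "all of the language on the internet".
CORPUS = [
    "happy birthday to you",
    "happy birthday to you",
    "happy birthday to you",
    "happy birthday to me",
    "happy birthday to them",
]

def tokenize(text):
    """Split text into lowercase word tokens (an assumption; real models use subwords)."""
    return text.lower().split()

def train_bigrams(corpus):
    """Count how often each token follows each preceding token."""
    counts = defaultdict(Counter)
    for line in corpus:
        tokens = tokenize(line)
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts

def next_word_probs(counts, prev):
    """Turn the raw follow-up counts for `prev` into a probability distribution."""
    total = sum(counts[prev].values())
    return {word: n / total for word, n in counts[prev].items()}

counts = train_bigrams(CORPUS)
probs = next_word_probs(counts, "to")
print(probs)  # "you" is the most likely word after "to"; "pudding" never appears
```

The model "knows" that "you" follows "to" only because it has seen that pattern most often, which is the same reason you know the song.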

This is why a language model can generate coherent sentences without understanding the things it writes about. It's why it hallucinates (another technical term!) plausible-sounding information but doesn't have any idea what's factual. Inevitably, “it’s gonna lie.” The model understands syntax, not semantics.

When you ask ChatGPT a question, you get a response that fits the patterns of the probability distribution of language that the model has seen before. It does not reflect knowledge, facts, or insights.
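As a rough illustration of a response that fits the learned patterns without reflecting facts, the sketch below samples words from counted follow-up frequencies. Everything here is an assumption for demonstration purposes: the three-sentence corpus (one of its statements is deliberately false), the helper names `train` and `generate`, and the bigram approach itself, which is far simpler than a real LLM.

```python
import random
from collections import Counter, defaultdict

# Hypothetical mini corpus; the third sentence is deliberately untrue.
CORPUS = [
    "the moon orbits the earth",
    "the earth orbits the sun",
    "the sun orbits the moon",
]

def train(corpus):
    """Count which word follows which across the corpus."""
    follows = defaultdict(Counter)
    for line in corpus:
        tokens = line.split()
        for prev, nxt in zip(tokens, tokens[1:]):
            follows[prev][nxt] += 1
    return follows

def generate(follows, start, length, rng):
    """Sample up to `length` words, each drawn in proportion to observed counts."""
    words = [start]
    for _ in range(length - 1):
        options = follows.get(words[-1])
        if not options:  # no known continuation for this word
            break
        choices, weights = zip(*options.items())
        words.append(rng.choices(choices, weights=weights, k=1)[0])
    return " ".join(words)

rng = random.Random(0)  # fixed seed so repeated runs give the same sample
follows = train(CORPUS)
sentence = generate(follows, "the", 5, rng)
print(sentence)
```

Every sentence this produces has the shape "the X orbits the Y" and reads fluently, but nothing in the code checks whether X really orbits Y. That is the gap between fitting a distribution and knowing something.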

And to make this even more fun, in that compression of language patterns, we also magnify the bias in the underlying language. This is how we end up with models that are even more problematic than what you find on the web. ChatGPT specifically does use human-corrected judgements to reduce the worst of this behavior, but those efforts are far from foolproof, and the model still reflects the biases of the humans doing the correcting.

Finally, because of the way these models are designed, they are at best, a representation of the average language used on the internet. By design, ChatGPT aspires to be the most mediocre web content you can imagine.

With all of that said, these language models are tremendously useful. They can take minimal inputs and make coherent text for us! They can help us draft, or translate, or change our writing style, or trigger new ideas. And they’ll be completely transformative.

Emphasis mine, because good god, it needs emphasizing now. I keep reading stories about ChatGPT passing a medical license exam, or being a person's therapist, or literally terrifying a New York Times reporter, when there's really nothing all that dramatic actually going on behind the scenes.

The problem, as I put it to Hilary, seems to be one of perception. OpenAI the organization is not really explaining what ChatGPT does, so when it generates impressive answers, people really believe it's super smart and even sentient.

"ChatGPT is fundamentally a UX update to GPT3, allowing iterative querying of the model, creating a much better experience," Hilary observes. "But it's not a fundamental change in tech!"

"As to why OpenAI hasn't tried to damp down the hype, I think ChatGPT has served as a tremendous marketing tool for them. Having it free (when the GPUs are definitely not free to operate), and with such an easy-to-use UX, has unleashed a tremendous amount of energy. Why would they do anything to lessen that?

"OpenAI is a startup, too. And ChatGPT isn't a product. My intuition is that they would tolerate some experimental behavior in order to discover what people will ultimately be willing to pay for?"

So as I understand it, the reason people put so many inflated expectations around ChatGPT is very much due to marketing and presentation -- not because of the technology itself. And you know what happens on the Gartner Hype Cycle after inflated expectations get, well, deflated. At some point soon, people will begin to realize it's basically a better version of Google Search (in certain contexts, at least), and recalibrate.

As for Hilary, her latest startup also uses AI, but in a very different way. And that's a story for another post.

Excerpt reprinted with permission from Hilary Mason's LinkedIn.

How does a dirty delaware nikka like myself get in on this early (I know I’m super late to most) .. just give me the lowest entry I can start at to get on this wave. Please.

GEPPETTO AI

GEPPETTO AI will drive the most powerful CINEMA in history, the first-ever infinite GAMES and be your partner in IMAGINATION.

check out BlenderGPT

Magic3D: High-Resolution Text-to-3D Content Creation

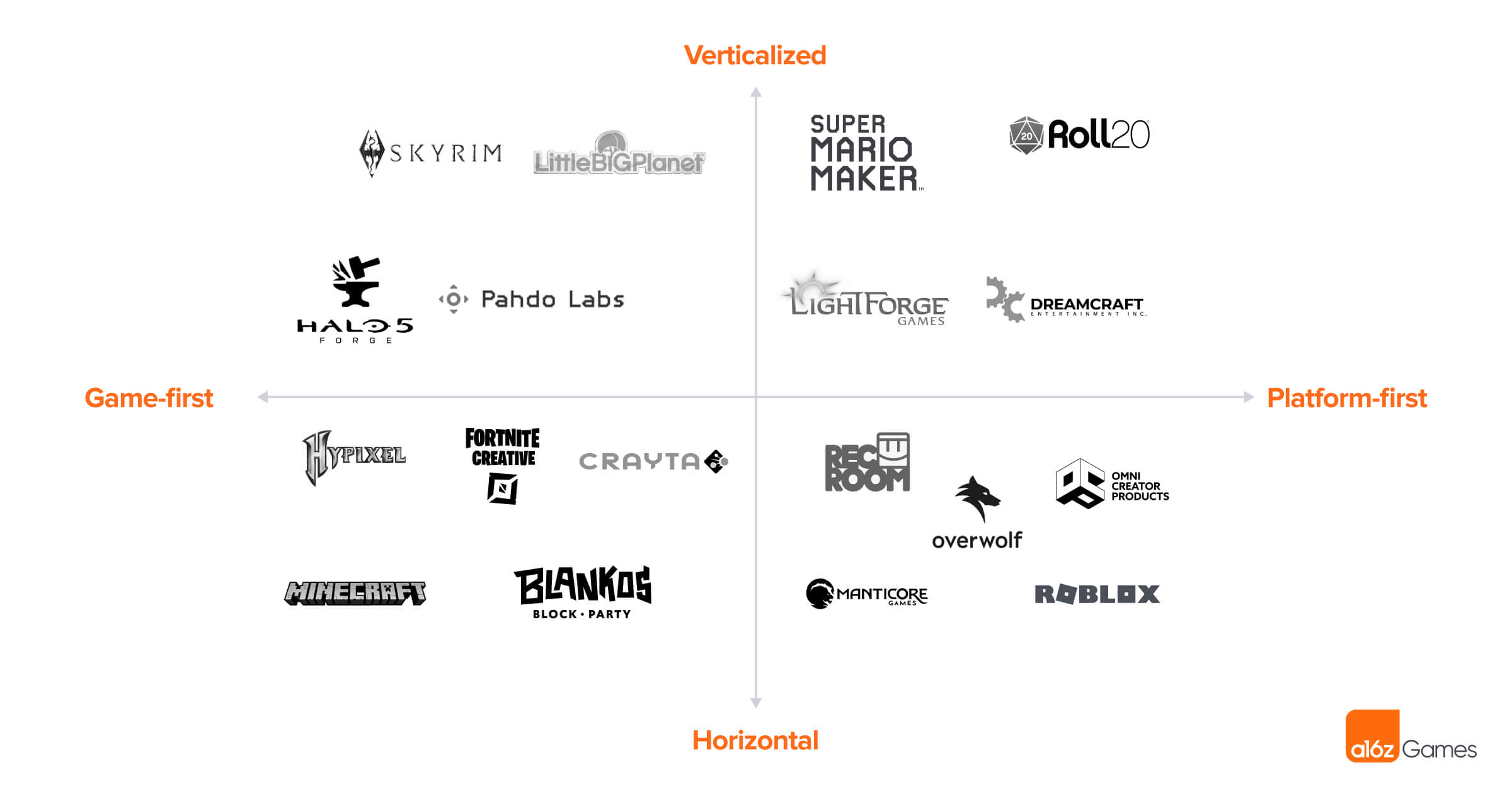

The Generative AI Revolution in Games | Andreessen Horowitz

To understand how radically gaming is about to be transformed by generative AI, look no further than this recent Twitter post by @emmanuel_2m. In this post he explores using Stable Diffusion + Dreambooth, popular 2D generative AI models, to generate images of potions for a hypothetical game...

The Generative AI Revolution will Enable Anyone to Create Games | Andreessen Horowitz

Generative AI will completely reshape UGC and expand the games market beyond what many thought was possible.

Luma | AI Agents for Creative Work

Your creative team becomes prolific with Luma Agents

Make-It-3D: High-Fidelity 3D Creation from A Single Image with Diffusion Prior

make-it-3d.github.io

Make-It-3D can create high-fidelity 3D content from only a single image.