Google DeepMind boss hits back at Meta AI chief over ‘fearmongering’ claim

Demis Hassabis said that Google DeepMind wasn't trying to achieve "regulatory capture" when it came to the discussion on how best to approach AI.

PUBLISHED TUE, OCT 31 2023 12:04 PM EDT

Ryan Browne

@RYAN_BROWNE_

KEY POINTS

- Google DeepMind boss Demis Hassabis told CNBC that the company wasn’t trying to achieve “regulatory capture” when it came to the discussion on how best to approach AI.

- Yann LeCun, Meta’s chief AI scientist, said that DeepMind’s Hassabis and other AI CEOs were “doing massive corporate lobbying” to ensure only a handful of big tech companies end up controlling AI.

- Hassabis said that it was important to start a conversation about regulating potentially superintelligent artificial intelligence now rather than later because, if left too long, the consequences could be grim.

The boss of Google DeepMind pushed back on a claim from Meta’s artificial intelligence chief alleging the company is pushing worries about AI’s existential threats to humanity to control the narrative on how best to regulate the technology.

In an interview with CNBC’s Arjun Kharpal, Hassabis said that DeepMind wasn’t trying to achieve “regulatory capture” when it came to the discussion on how best to approach AI. It comes as DeepMind is closely informing the U.K. government on its approach to AI ahead of a pivotal summit on the technology due to take place on Wednesday and Thursday.

Over the weekend, Yann LeCun, Meta’s chief AI scientist, said that DeepMind’s Hassabis, OpenAI CEO Sam Altman and Anthropic CEO Dario Amodei were “doing massive corporate lobbying” to ensure only a handful of big tech companies end up controlling AI.

He also said they were giving fuel to critics who say that highly advanced AI systems should be banned to avoid a situation where humanity loses control of the technology.

“If your fearmongering campaigns succeed, they will *inevitably* result in what you and I would identify as a catastrophe: a small number of companies will control AI,” LeCun said on X, the platform formerly known as Twitter, on Sunday.

“Like many, I very much support open AI platforms because I believe in a combination of forces: people’s creativity, democracy, market forces, and product regulations. I also know that producing AI systems that are safe and under our control is possible. I’ve made concrete proposals to that effect.”

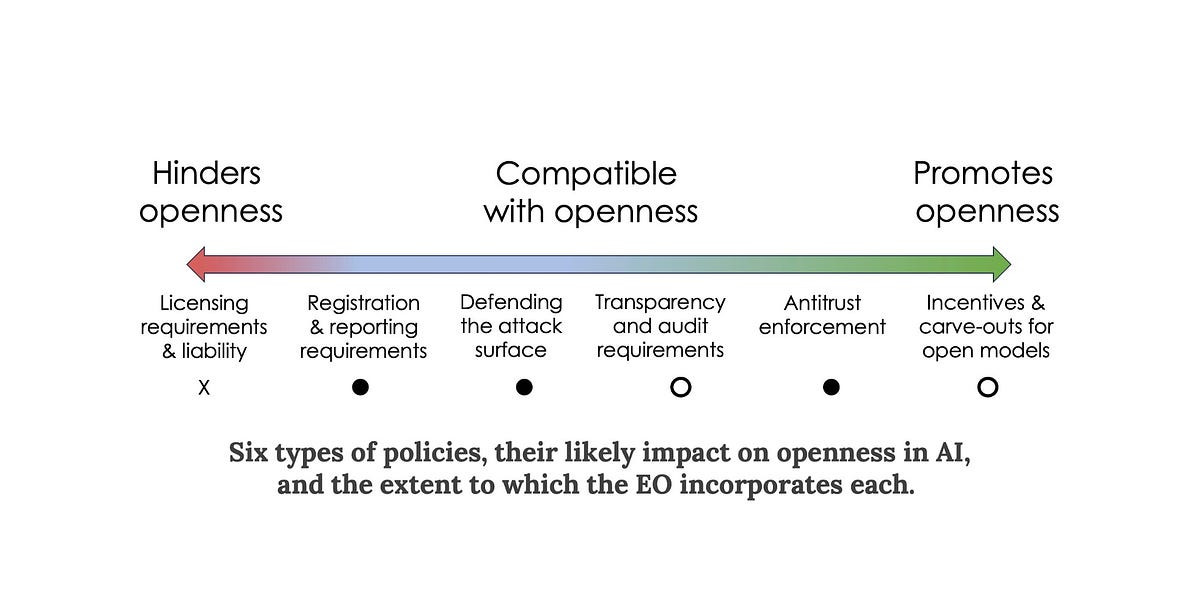

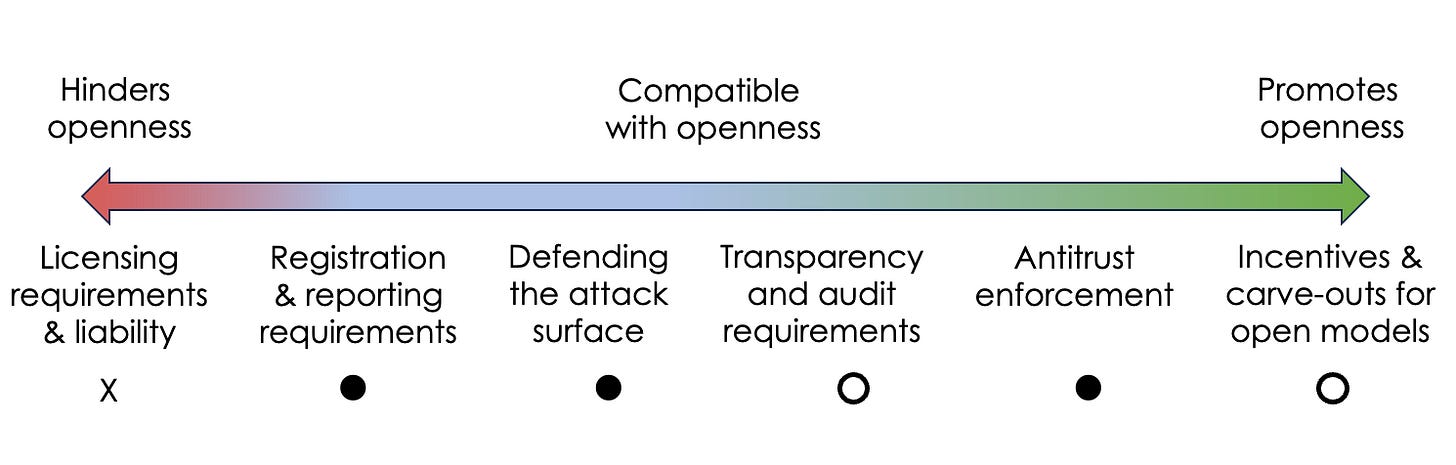

LeCun is a big proponent of open-source AI, or AI software that is openly available to the public for research and development purposes. This is opposed to “closed” AI systems, the source code of which is kept a secret by the companies producing it.

LeCun said that the vision of AI regulation Hassabis and other AI CEOs are aiming for would see open-source AI “regulated out of existence” and allow only a small number of companies from the West Coast of the U.S. and China to control the technology.

Meta is one of the largest technology companies working to open-source its AI models. The company’s LLaMa large language model (LLM) software is one of the biggest open-source AI models out there, and has advanced language translation features built in.

In response to LeCun’s comments, Hassabis said Tuesday: “I pretty much disagree with most of those comments from Yann.”

“I think the way we think about it is there’s probably three buckets or risks that we need to worry about,” said Hassabis. “There’s sort of near term harms things like misinformation, deepfakes, these kinds of things, bias and fairness in the systems, that we need to deal with.”

“Then there’s sort of the misuse of AI by bad actors repurposing technology, general-purpose technology for bad ends that they were not intended for. That’s a question about proliferation of these systems and access to these systems. So we have to think about that.”

“And then finally, I think about the more longer-term risk, which is technical AGI [artificial general intelligence] risk,” Hassabis said.

“So the risk of themselves making sure they’re controllable, what value do you want to put into them have these goals and make sure that they stick to them?”

Hassabis is a big proponent of the idea that we will eventually achieve a form of artificial intelligence powerful enough to surpass humans in all tasks imaginable, something that’s referred to in the AI world as “artificial general intelligence.”

Hassabis said that it was important to start a conversation about regulating potentially superintelligent artificial intelligence now rather than later, because if it is left too long, the consequences could be grim.

“I don’t think we want to be doing this on the eve of some of these dangerous things happening,” Hassabis said. “I think we want to get ahead of that.”

Meta was not immediately available for comment when contacted by CNBC.

Cooperation with China

Both Hassabis and James Manyika, Google’s senior vice president of research, technology and society, said that they wanted to achieve international agreement on how best to approach the responsible development and regulation of artificial intelligence.

Manyika said he thinks it’s a “good thing” that the U.K. government, along with the U.S. administration, agree there is a need to reach global consensus on AI.

“I also think that it’s going to be quite important to include everybody in that conversation,” Manyika added.

“I think part of what you’ll hear often is we want to be part of this, because this is such an important technology, with so much potential to transform society and improve lives everywhere.”

One point of contention surrounding the U.K. AI summit has been the attendance of China. A delegation from the Chinese Ministry of Science and Technology is due to attend the event this week.

That has stirred unease in some corners of the political world, both within the U.S. government and among some of Prime Minister Rishi Sunak’s own ranks.

These officials are worried that China’s involvement in the summit could pose certain risks to national security, particularly as Beijing has a strong influence over its technology sector.

Asked whether China should be involved in the conversation surrounding artificial intelligence safety, Hassabis said that AI knows no borders, and that it requires coordination among actors in multiple countries to reach international agreement on the standards required for AI.

“This technology is a global technology,” Hassabis said. “It’s really important, at least on a scientific level, that we have as much dialogue as possible.”

Asked whether DeepMind was open as a company to working with China, Hassabis responded: “I think we have to talk to everyone at this stage.”

U.S. technology giants have shied away from doing commercial work in China, particularly as Washington has applied huge pressure on the country on the technology front.
