One of the milestones was beating the ARC-AGI benchmark. Here's the definition:

Was this model specifically trained for this test? Because if so, that would mean it's good at that one but bad at all the others, wouldn't it?

It's not just any benchmark. From the sound of things, it was specifically designed to avoid 'training for the test'. It tests whether an AI can truly learn and adapt to new situations rather than just apply pre-learned patterns; it's testing for 'learning on the fly', if you will. The benchmark was created by François Chollet, whom Time Magazine named to its list of the top 100 people in AI earlier this year. Here's a snippet on why the ARC Prize is (was?) important:

The purpose of ARC Prize is to redirect more AI research focus toward architectures that might lead toward artificial general intelligence (AGI) and ensure that notable breakthroughs do not remain a trade secret at a big corporate AI lab.

ARC-AGI is the only AI benchmark that tests for general intelligence by testing not just for skill, but for skill acquisition.

François Chollet, the 34-year-old Google software engineer and creator of deep-learning application programming interface (API) Keras, is challenging the AI status quo. While tech giants bet on achieving more advanced AIs by feeding ever more data and computational resources to large language models (LLMs), Chollet argues this approach alone won't achieve artificial general intelligence (AGI).

His $1.1 million ARC Prize, launched in June 2024 with Mike Knoop, Lab42 and Infinite Monkey, dares researchers to solve spatial reasoning problems that confound current systems but are comparatively simple for humans. The competition's results seem to be proving Chollet right. Though the top of the leaderboard is still far below the human average of 84%, top models are steadily improving—from 21% in 2020 to 43% accuracy.

TIME100 AI 2024: Francois Chollet

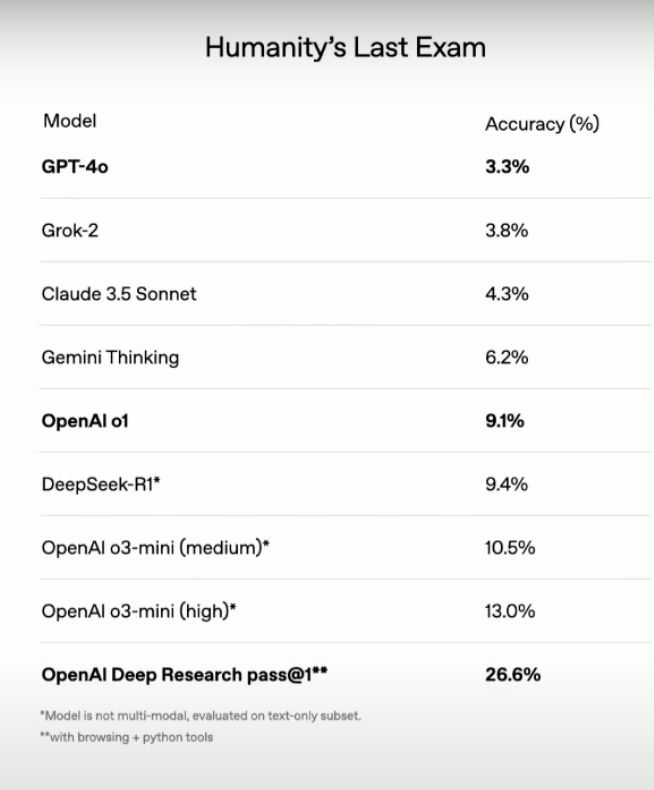

That piece was written in September. Two months later, OpenAI has beaten the benchmark, and it changes everything.

The breakthrough isn't just about the high score - it's about HOW o3 achieved it. Instead of just scaling up existing approaches (bigger models, more training data), o3 demonstrates a fundamentally different capability: it can actively search for and construct solutions to problems it's never seen before.

While it is prohibitively expensive (it cost ~$350k to get that score), OpenAI's latest model was able to solve ARC-AGI better than the average human can.

My understanding is that people now realize we have done the hard part, which is figuring out an approach that scales to AGI, and what remains is hardware that makes that scaling less expensive. Reaching AGI-level performance (for all intents and purposes) is now possible but costly. Moore's Law suggests that this kind of hardware bottleneck will take care of itself 'naturally', so making AGI accessible is now mostly a matter of costs coming down over time, in the same way that a TB of storage cost $87 billion in 1956 but can now be had for $15 used.
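
For a rough sense of what that storage comparison implies, here's a back-of-the-envelope sketch in Python. It assumes a constant exponential decline, treats "now" as 2024, and uses a made-up target cost for an o3-style run; none of those assumptions come from OpenAI or ARC Prize, and there's no guarantee compute costs follow the same curve as storage did.

```python
import math


def halving_time_years(cost_start, cost_end, years):
    """Years per halving of cost, assuming a constant exponential decline."""
    halvings = math.log2(cost_start / cost_end)
    return years / halvings


def years_to_reach(cost_now, cost_target, halving_years):
    """Years until cost_now falls to cost_target at the given halving time."""
    return math.log2(cost_now / cost_target) * halving_years


# Figures from the post: 1 TB cost ~$87 billion in 1956 and ~$15 (used) now.
# Assumption: "now" is 2024, so the span is 68 years.
storage_halving = halving_time_years(87e9, 15, 2024 - 1956)
print(f"Storage cost halved about every {storage_halving:.1f} years")

# Hypothetical extrapolation: if the ~$350k benchmark run got cheaper at the
# same rate as storage, how long until it cost TARGET_COST?
# TARGET_COST is an arbitrary illustrative number, not a real price point.
TARGET_COST = 1_000
wait = years_to_reach(350_000, TARGET_COST, storage_halving)
print(f"Years for $350k to fall to ${TARGET_COST:,}: about {wait:.0f}")
```

Swapping in a different halving time or target cost shows how sensitive the 'it will take care of itself' argument is to those inputs.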
