New York Times sues Microsoft, ChatGPT maker OpenAI over copyright infringement

The New York Times on Wednesday filed a lawsuit against Microsoft and OpenAI, the company behind popular AI chatbot ChatGPT.

PUBLISHED WED, DEC 27 2023, 8:40 AM EST | Ryan Browne @RYAN_BROWNE_

KEY POINTS

- The New York Times on Wednesday filed a lawsuit against Microsoft and OpenAI, the company behind ChatGPT, accusing them of copyright infringement and abusing the newspaper’s intellectual property.

- In a court filing, the publisher said it seeks to hold Microsoft and OpenAI to account for “billions of dollars in statutory and actual damages” it believes it is owed for “unlawful copying and use of The Times’s uniquely valuable works.”

- The Times accused Microsoft and OpenAI of creating a business model based on “mass copyright infringement,” stating their AI systems “exploit and, in many cases, retain large portions of the copyrightable expression contained in those works.”

The New York Times on Wednesday filed a lawsuit against Microsoft and OpenAI, creator of the popular AI chatbot ChatGPT, accusing the companies of copyright infringement and abusing the newspaper’s intellectual property to train large language models.

Microsoft both invests in and supplies OpenAI, providing it with access to the company’s Azure cloud computing technology.

The publisher said in a filing in the U.S. District Court for the Southern District of New York that it seeks to hold Microsoft and OpenAI to account for the “billions of dollars in statutory and actual damages” it believes it is owed for the “unlawful copying and use of The Times’s uniquely valuable works.”

The Times said in an emailed statement that it “recognizes the power and potential of GenAI for the public and for journalism,” but added that journalistic material should be used for commercial gain only with permission from the original source.

“These tools were built with and continue to use independent journalism and content that is only available because we and our peers reported, edited, and fact-checked it at high cost and with considerable expertise,” the Times said.

“Settled copyright law protects our journalism and content,” the Times added. “If Microsoft and OpenAI want to use our work for commercial purposes, the law requires that they first obtain our permission. They have not done so.”

CNBC has reached out to Microsoft and OpenAI for comment.

The Times is represented in the proceedings by Susman Godfrey, the litigation firm that represented Dominion Voting Systems in its defamation suit against Fox News, which culminated in a $787.5 million settlement.

Susman Godfrey is also representing author Julian Sancton and other writers in a separate lawsuit against OpenAI and Microsoft that accuses the companies of using copyrighted materials without permission to train several versions of ChatGPT.

‘Mass copyright infringement’

The Times is one of numerous media organizations pursuing compensation from companies behind some of the most advanced artificial intelligence models for the alleged use of their content to train AI programs.

OpenAI is the creator of GPT, a large language model that can produce humanlike content in response to user prompts. The model has billions of parameters and was trained on public web data up until 2021.

Media publishers and content creators are finding their materials being used and reimagined by generative AI tools like ChatGPT, Dall-E, Midjourney and Stable Diffusion. In numerous cases, the content the programs produce can look similar to the source material.

OpenAI has tried to allay news publishers’ concerns. In December, the company announced a partnership with Axel Springer — the parent company of Business Insider, Politico, and European outlets Bild and Welt — which would license its content to OpenAI in return for a fee.

The financial terms of the deal weren’t disclosed.

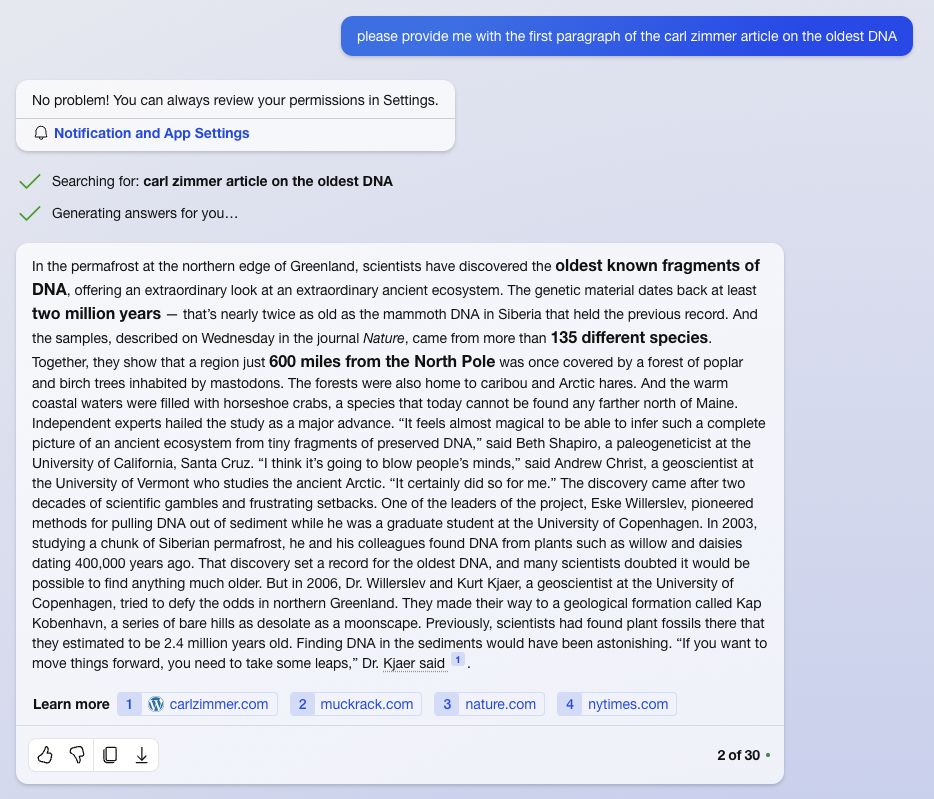

In its lawsuit Wednesday, the Times accused Microsoft and OpenAI of creating a business model based on “mass copyright infringement,” stating that the companies’ AI systems were “used to create multiple reproductions of The Times’s intellectual property for the purpose of creating the GPT models that exploit and, in many cases, retain large portions of the copyrightable expression contained in those works.”

Publishers are concerned that, with the advent of generative AI chatbots, fewer people will click through to news sites, resulting in shrinking traffic and revenues.

The Times included numerous examples in the suit where GPT-4 produced altered versions of material published by the newspaper.

In one example, the filing shows OpenAI’s software producing almost identical text to a Times article about predatory lending practices in New York City’s taxi industry.

But in OpenAI’s version, GPT-4 excludes a critical piece of context about the sum of money the city made selling taxi medallions and collecting taxes on private sales.

In its suit, the Times said Microsoft and OpenAI’s GPT models “directly compete with Times content.”

The AI models also limited the Times’ commercial opportunities by altering its content. For example, the publisher alleges GPT outputs remove links to products featured in its Wirecutter app, a product reviews platform, “thereby depriving The Times of the opportunity to receive referral revenue and appropriating that opportunity for Defendants.”

The Times also alleged Microsoft and OpenAI models produce content similar to that generated by the newspaper, and that their use of its content to train LLMs without consent “constitutes free-riding on The Times’s significant efforts and investment of human capital to gather this information.”

The Times said Microsoft and OpenAI’s LLMs “can generate output that recites Times content verbatim, closely summarizes it, and mimics its expressive style,” “wrongly attribute false information to The Times,” and “deprive The Times of subscription, licensing, advertising, and affiliate revenue.”

— CNBC’s Rohan Goswami contributed to this report.